|

IF YOU ARE HAVING A PROBLEM

- Take a look at the logs in C:\Program Files\CodeProject\AI\logs and see if there's anything in there that screams 'something broke'.

- Check the FAQs in the CodeProject.AI Server documentation

- Make sure you've tested the server using the Explorer (blue link, top middle of the dashboard) to ensure it's a server issue rather than something else such as Blue Iris or another app using CodeProject.AI server.

- If there's no obvious answer, then copy and paste the contents of the System Info tab into a message, and describe what you are doing, what you see, and what you expected to see.

Always include a copy and paste from the System Info tab of the dashboard. It gives us a ton of info on your setup. If an individual module is failing, click the 'Info' button to the right of the module's name in the status list and copy and paste that info too.
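
The log check in the first step above can be partly automated. The following is only a rough sketch: the keyword list and the `.txt`/`.log` file suffixes are assumptions, not something the server documents, and `find_suspicious_lines` is a hypothetical helper name.

```python
from pathlib import Path

# Assumed keywords; adjust them to whatever your logs actually contain.
KEYWORDS = ("error", "exception", "traceback", "failed")


def find_suspicious_lines(log_dir, keywords=KEYWORDS, suffixes=(".txt", ".log")):
    """Return (file name, line number, text) for every line containing a keyword."""
    hits = []
    for path in sorted(Path(log_dir).rglob("*")):
        # Skip folders and anything that does not look like a text log (assumed suffixes).
        if not path.is_file() or path.suffix.lower() not in suffixes:
            continue
        with path.open(encoding="utf-8", errors="replace") as handle:
            for number, line in enumerate(handle, start=1):
                if any(word in line.lower() for word in keywords):
                    hits.append((path.name, number, line.rstrip()))
    return hits
```

Calling `find_suspicious_lines(r"C:\Program Files\CodeProject\AI\logs")` returns a list you can print or paste into your message alongside the System Info output.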

How to reinstall a module

Option 1. Go to the install modules tab on the dashboard and try re-installing the package. Make sure you have enough disk space and a reliable internet connection.

Option 2: (Option 1 with a vengeance): If that fails, head to the module's folder ([app root]\modules\module-id), open a terminal in admin mode, and run ..\..\setup. This will force a manual reinstall using the install script.

Docker: In Docker you will need to open a terminal into the Docker container. You can do this using Docker Desktop, or Visual Studio Code with the Docker remote extension, or on the command line using docker attach. Then do a cd /app/modules/module-id where module-id is the id of the module you need to reinstall. Next, run sudo bash ../../setup.sh --verbosity info to force a manual reinstall of that module. (Set verbosity to quiet, info or loud to get less or more info.)

cheers

Chris Maunder

modified 18-Feb-24 15:48pm.

|

|

|

|

|

If you are a Blue Iris user and you are using custom models, you will notice that the option in Blue Iris to set the custom model location is greyed out. This is because Blue Iris does not currently make changes to CodeProject.AI Server's settings. The settings can be changed by manually starting CodeProject.AI with command line parameters (not a great solution), by editing the module settings files (a little messy), or by setting system-wide environment variables (way easier). For version 1.6 we added an API to allow any app to change our settings programmatically, and we take care of stopping/restarting things and persisting the changes.

So: Blue Iris doesn't currently change CodeProject.AI Server's settings, so it doesn't provide you a way to change the custom model folder location from within Blue Iris.

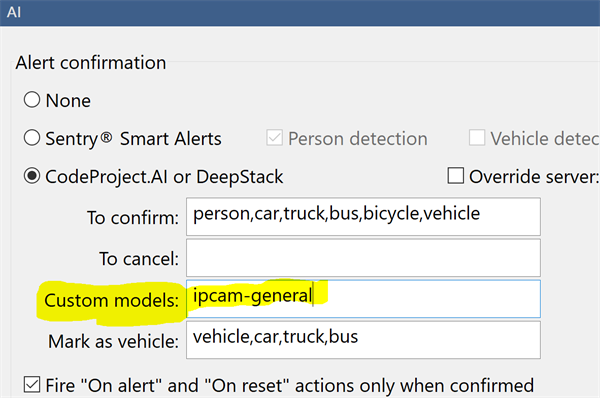

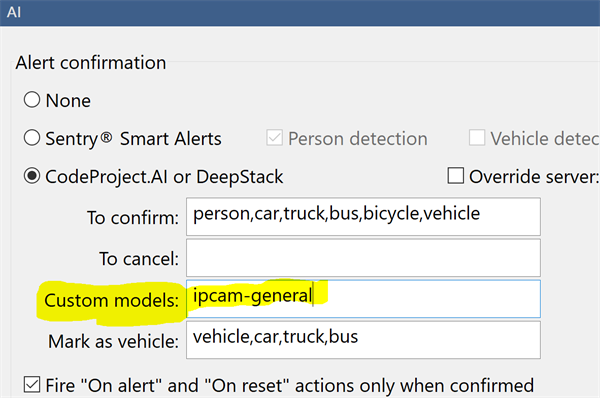

Blue Iris will still use the contents of this folder to determine the calls it makes. If you don't specify a model to use in the Custom Models textbox, then Blue Iris will use all models in the custom models folder that it knows about.

Here we've specified a specific model to use. The Blue Iris help file explains more about how this works, including inclusive and exclusive filters on the models it finds.

CodeProject.AI Server doesn't know about Blue Iris' folder, so it can't tell what models it may be expected to use, nor can it tell Blue Iris about what models CodeProject.AI server has available. Our API allows Blue Iris to get a list of the AI models installed with CodeProject.AI Server, and also to set the folder where these models reside. But Blue Iris doesn't, yet, use that API.

So we do a hack.

At install time we sniff the registry to find where Blue Iris thinks the custom models should be. We then make empty copies of the models that we have, and copy them into that folder. If the folder doesn't exist (eg you were using C:\Program Files\CodeProject\AI\AnalysisLayer\CustomObjectDetection\assets, which no longer exists) then we create that folder, and then copy over the empty files.

When Blue Iris looks in that folder to decide what custom calls it can make, it sees the models, notes their names, and uses those names in the calls. CodeProject.AI Server has those models, so when the calls come through we can process them.

Blue Iris doesn't use the models. It uses the list of model names.
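
The placeholder trick described above can be sketched roughly as follows. This is an illustration, not the installer's actual code: `mirror_model_names` is a hypothetical name, the `*.pt` pattern is an assumption about the model file extension, and skipping files that already exist is a choice made for this sketch. The registry lookup is left out; both folders are passed in.

```python
from pathlib import Path


def mirror_model_names(server_models_dir, blue_iris_dir, pattern="*.pt"):
    """Create an empty file in blue_iris_dir for each model in server_models_dir."""
    target = Path(blue_iris_dir)
    target.mkdir(parents=True, exist_ok=True)  # create the folder if it is missing
    created = []
    for model in sorted(Path(server_models_dir).glob(pattern)):
        placeholder = target / model.name
        if not placeholder.exists():  # sketch choice: never overwrite a file already there
            placeholder.touch()       # empty file: only the name matters to Blue Iris
            created.append(placeholder.name)
    return created
```

Because only the file names are read, the placeholders can be zero bytes; the real models stay in the server's own custom model folder.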

If you have your own models in the Blue Iris folder

You will need to copy them to the CodeProject.AI server's custom model folder (by default this is C:\Program Files\CodeProject\AI\AnalysisLayer\ObjectDetectionYolo\custom-models)

If you've modified the registry and have your own custom models

If you were using a folder in C:\Program Files\CodeProject\AI\AnalysisLayer\CustomObjectDetection\ (which no longer existed after the upgrade, but was recreated by our hack) you'll need to re-copy your custom model into that folder.

The simplest solutions are:

- Modify the registry (Computer\HKEY_LOCAL_MACHINE\SOFTWARE\Perspective Software\Blue Iris\Options\AI, key 'deepstack_custompath') so Blue Iris looks in C:\Program Files\CodeProject\AI\AnalysisLayer\ObjectDetectionYolo\custom-models for custom models, and copy your models into there.

or

- Modify the C:\Program Files\CodeProject\AI\AnalysisLayer\ObjectDetectionYolo\modulesettings.json file and set CUSTOM_MODELS_DIR to be whatever Blue Iris thinks the custom model folder is.

cheers

Chris Maunder

|

|

|

|

|

On the latest CPAI and using a Coral USB, CPAI at some point switches to using the computer's CPU instead of the Coral. Blue Iris continues to work, but obviously my computer's CPU usage goes up and the response times are greatly increased.

After a few days of running CPAI with the Coral, I noticed that I hadn't had to restart the CPAI docker in quite a while (usually, the Coral either stops responding silently or shuts down explicitly a couple of times every single day). I was suspicious at this "success" and checked, and saw that CPAI was running the detections on my computer's CPU. The status still said "CPU (TF-Lite)" which it says even when the Coral is working, but the detections were taking ~88ms instead of the usual ~13ms.

As with every other problem with the Coral, this was temporarily remedied by me manually restarting the CPAI docker.
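
Since the status text reads the same either way, the inference time is the only signal mentioned here. A minimal sketch of a check on that signal, assuming you collect recent inference times (in milliseconds) yourself, for example from the server log; `looks_like_cpu_fallback`, the 13 ms baseline default and the factor of 3 are illustrative choices, not server settings.

```python
from statistics import median


def looks_like_cpu_fallback(recent_ms, baseline_ms=13.0, factor=3.0):
    """Return True if the median of recent inference times exceeds factor * baseline."""
    if not recent_ms:
        return False  # no data, no verdict
    # The median is used so a single slow request does not trigger a false alarm.
    return median(recent_ms) > baseline_ms * factor
```

With the numbers above, timings around 88 ms would be flagged and timings around 13 ms would not.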

|

|

|

|

|

USB Coral? Windows host?

cheers

Chris Maunder

|

|

|

|

|

Just updated to 2.6.2 (Docker).

After some time the log gets flooded with:

13:26:18:detect_adapter.py: WARNING ⚠️ NMS time limit 0.550s exceeded

13:26:28:detect_adapter.py: WARNING ⚠️ NMS time limit 0.550s exceeded

13:27:25:detect_adapter.py: WARNING ⚠️ NMS time limit 0.550s exceeded

13:27:30:detect_adapter.py: WARNING ⚠️ NMS time limit 0.550s exceeded

It never did that before.

How do I fix this?

|

|

|

|

|

What module and hardware?

|

|

|

|

|

Thanks very much for your report. Could you please share your System Info tab from your CodeProject.AI Server dashboard?

Thanks,

Sean Ewington

CodeProject

|

|

|

|

|

You're using Coral, right? USB or PCIe?

This error occurs when you're using the YOLOv5 models in Coral, and the non-max suppression method takes too long to sort through all the bounding boxes identified in an image.
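
For readers unfamiliar with non-max suppression, here is a generic greedy version in plain Python. It is a textbook sketch, not the module's actual implementation, and the 0.45 threshold is only an illustrative default. It shows why the step slows down as the number of candidate boxes grows: in the worst case each box is compared against every box already kept.

```python
def iou(a, b):
    """Intersection over union of two boxes given as (x1, y1, x2, y2)."""
    ix1, iy1 = max(a[0], b[0]), max(a[1], b[1])
    ix2, iy2 = min(a[2], b[2]), min(a[3], b[3])
    inter = max(0.0, ix2 - ix1) * max(0.0, iy2 - iy1)
    area_a = (a[2] - a[0]) * (a[3] - a[1])
    area_b = (b[2] - b[0]) * (b[3] - b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


def non_max_suppression(boxes, scores, iou_threshold=0.45):
    """Return indices of the boxes to keep, highest score first."""
    order = sorted(range(len(boxes)), key=lambda i: scores[i], reverse=True)
    keep = []
    for i in order:
        # Keep a box only if it does not overlap too much with any box already kept.
        if all(iou(boxes[i], boxes[k]) <= iou_threshold for k in keep):
            keep.append(i)
    return keep
```

An image that produces thousands of low-confidence candidate boxes makes this loop do far more comparisons than a clean scene with a handful of objects.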

What hardware are you running the docker container on, and if possible, could you post an example of an image that's causing this?

cheers

Chris Maunder

|

|

|

|

|

Been running 2.6.2 since its release with YOLOv8 v1.4.3 and FaceProcessing v1.10.2 as well.

Then I restarted the server that runs the Docker environment. Upon restart it keeps telling me:

An item with the same key has already been added. Key: FaceProcessing.

I searched all the AppModules for extra FaceProcessing but nothing...

The only thing that would allow me to start CodeProject was to rename the folder FaceProcessing under

C:\ProgramData\CodeProject\AI\docker\modules

Which I bind with the way I run the Docker container:

docker run --name cuda12_2-2.6.2 -d -p 32168:32168 --gpus all --mount type=bind,source=C:\ProgramData\CodeProject\AI\docker\data,target=/etc/codeproject/ai --mount type=bind,source=C:\ProgramData\CodeProject\AI\docker\modules,target=/app/modules codeproject/ai-server:cuda12_2-2.6.2

This was a clean install, meaning nothing was in the ProgramData\CodeProject\AI\Docker folder until I installed YOLOv8 and Upgraded FaceProcessing. Those were put there on the install.

|

|

|

|

|

This is an issue that occurs when a module is pre-installed and you then update or reinstall it, so that it appears in a different folder. This has been fixed in our next release, due next week.

cheers

Chris Maunder

|

|

|

|

|

Understanding that the YoloV5 model likes to detect house plants and tooth brushes in my backyard, how do I detect all things? Currently in BlueIris under Camera Settings -> Alert -> AI configuration I have "To confirm" set to the default of "person,car,truck,bus,bicycle,vehicle". But I know there is a lot of other stuff, like dog cat... Toothbrush. Can I set "To confirm" to a wild card?

modified yesterday.

|

|

|

|

|

You can just leave it blank and it will detect all the objects that the model detects

|

|

|

|

|

I recently installed CodeProject.AI and passed my Coral USB stick through to the Docker container. I've downloaded the Coral USB module within the CPAI interface, but I'm not sure how to make sure all other installed models are running through my Coral stick and not the CPU.

Is there a way to verify this?

|

|

|

|

|

Running CodeProject.AI 2.6.2 with Object Detection (Coral) 2.2.2. When I watch the log I get a lot of the following warnings:

16:27:48:objectdetection_coral_adapter.py: WARNING:root:No work in 60.0 seconds, watchdog shutting down TPUs.

16:27:53:objectdetection_coral_adapter.py: WARNING:root:No work in 60.0 seconds, watchdog shutting down TPUs.

16:27:58:objectdetection_coral_adapter.py: WARNING:root:No work in 60.0 seconds, watchdog shutting down TPUs.

16:28:03:objectdetection_coral_adapter.py: WARNING:root:No work in 60.0 seconds, watchdog shutting down TPUs.

16:28:08:objectdetection_coral_adapter.py: WARNING:root:No work in 60.0 seconds, watchdog shutting down TPUs.

16:28:13:objectdetection_coral_adapter.py: WARNING:root:No work in 60.0 seconds, watchdog shutting down TPUs.

16:28:18:objectdetection_coral_adapter.py: WARNING:root:No work in 60.0 seconds, watchdog shutting down TPUs.

What causes this, and how can I fix it?

|

|

|

|

|

It should be booting itself back up as soon as new work arrives, so it shouldn’t be a problem.

|

|

|

|

|

But detection time seems to be a bit slower now, 15-20ms before vs 25ms now? That is really not that much slower but the constant red in the status window makes it seem like something bad is happening.

|

|

|

|

|

In Blue Iris, when a new trigger arrives I get an "AI: error 500". This has also been happening since the last update. It looks like it needs to wake up the Coral and then hits a timeout error.

|

|

|

|

|

Hi,

The majority of the time, even with a clear view and multiple reads showing the same plate (I send images to the server), it reads the character as an I when it should be the number 1.

HW13 PBX reported as HWI PBX

As this all goes on in my Python program I could program the adjustment myself, but it would be nice if it were a built-in feature, perhaps either a country flag or even a template such as XX##XXX.
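
A client-side version of the template idea might look like the sketch below. It is not a server feature: `apply_template` and the two confusion tables are hypothetical, and only the I/1 and O/0 pairs mentioned in this thread are covered. In the template, `X` marks a letter slot and `#` a digit slot.

```python
# Characters commonly confused by OCR; only the pairs mentioned in this thread.
TO_DIGIT = {"I": "1", "O": "0"}
TO_LETTER = {"1": "I", "0": "O"}


def apply_template(reading, template="XX##XXX"):
    """Swap look-alike characters so the reading matches the letter/digit template."""
    chars = reading.replace(" ", "").upper()
    if len(chars) != len(template):
        return reading  # length mismatch: a template cannot repair it, leave untouched
    fixed = []
    for ch, slot in zip(chars, template):
        if slot == "#":
            fixed.append(TO_DIGIT.get(ch, ch))   # digit slot: I -> 1, O -> 0
        elif slot == "X":
            fixed.append(TO_LETTER.get(ch, ch))  # letter slot: 1 -> I, 0 -> O
        else:
            fixed.append(ch)
    return "".join(fixed)
```

A hypothetical seven-character reading such as `HWI3 PBX` comes back as `HW13PBX` (spaces are dropped). A reading with a missing character, such as the six-character `HWI PBX`, is returned unchanged because the sketch cannot guess what was lost.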

I know uk private plates might cause issues but better to have the majority.

Previously I used the paid version of Plate Recognizer (parkpow), which was extremely accurate and had country settings. It always got the I and 1 correct but, oddly, mixed up O and 0 fairly often.

Thanks I/A

|

|

|

|

|

I am building out a large Blueiris setup with 30X 8K cameras. What specific hardware should I keep in mind for Codeproject AI? RAM, GPU, VRAM?

I am looking at running the YOLOv8x model.

Thanks!

|

|

|

|

|

The RTX 3060 12GB would be a good GPU

|

|

|

|

|

Should I care more about card generation GTX 1XXX, RTX 20XX, 30XX ,40XX? Or VRAM?

|

|

|

|

|

|

Would an Nvidia 3050 6GB be preferable to an Nvidia Tesla P40 24GB?

Both cards are at the same price point and have very similar compute performance. The 3050 is rated at around 50W depending on flavor. The P40 wants around 250W. The 3050 has 6GB~8GB of VRAM vs 24GB in the P40.

|

|

|

|

|

YOLOv8 medium is only 100 MB in size, so VRAM shouldn’t be a huge issue until you’re training a new model or running more than a handful of models at a time.

At least in theory. Mike is gonna have better advice in practice.

|

|

|

|

|

I run 25 4-8 megapixel cams on an RTX A5000 VM with 8GB. The card is bored out of its RAM at 1%-5% utilization.

|

|

|

|
