|

YOLOv5 works fine with the CPU and .NET modules, but I am not aware of any way to get it working with Coral in CPAI. I tried updating modelsettings.json, but the values in there seem to be ignored. (I'm not sure how the GUI updates these settings.)

I'm not convinced it's a kernel setting issue, as it's on Windows. I'm not too convinced it's hardware either, as the other models eventually work OK after a fair bit of rebooting and resetting. Well, it may be hardware, but I don't think that's the cause of the issue every time.

I often get that same "IndexError: list index out of range" with multi-TPU enabled. I suspect that is the main issue.

Without MikeLud's custom IPcam models, I'm not sure there's even any point in trying to get it working just yet.

Edit: I finally managed to get it (supposedly) working with YOLOv8. I had to do a clean install and "prime" the TPU with the other model types first; then it seemed to work. But I am a little suspicious that it is not really using YOLOv8, as the detection percentages etc. are identical to those from MobileNet SSD. I have a feeling there are a few bugs with switching models. I notice that whenever you shut down the Coral module, it logs:

21:16:38:objectdetection_coral_adapter.py: Object Detection (Coral) started.

21:16:38:objectdetection_coral_adapter.py: Using model mobilenet ssd, size medium

21:16:38:Module ObjectDetectionCoral has shutdown

even though you are shutting it down and are using a different model.

modified 22-Feb-24 6:21am.

|

I don't know. When I try to set it to Huge, it tells me it is incompatible with my GPU (RTX 3070).

Do you think YOLOv8 is better than v6.2?

|

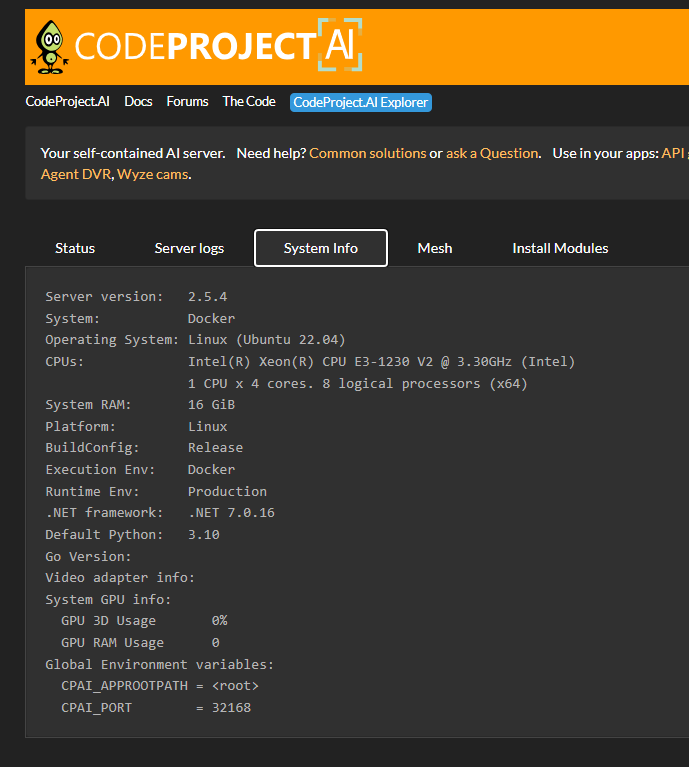

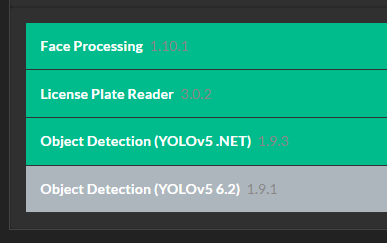

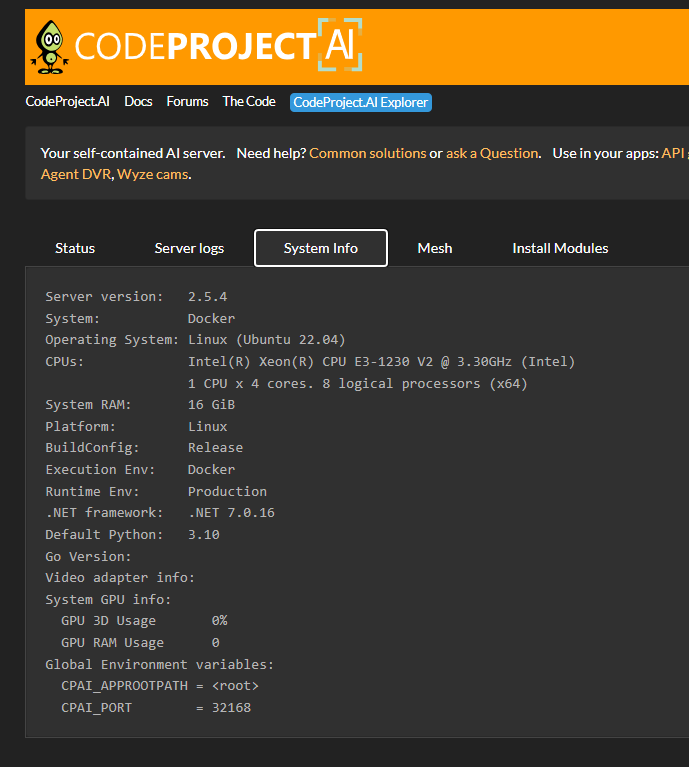

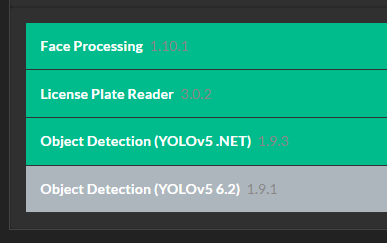

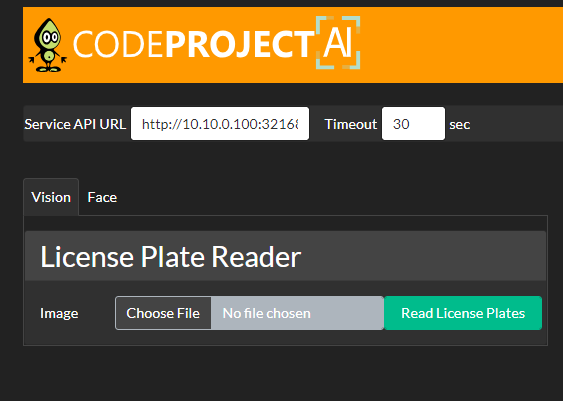

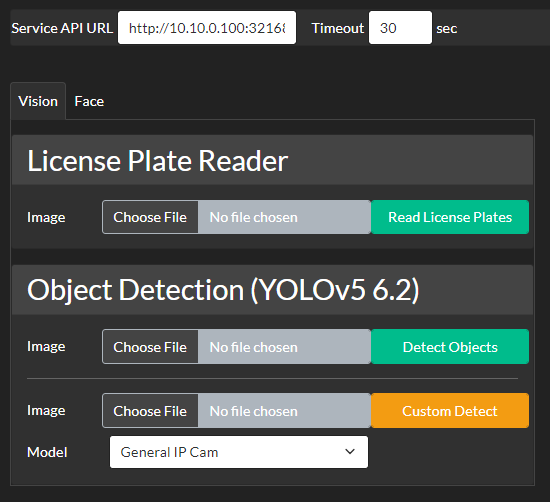

I am running CP.AI 2.5.4 in an unRaid docker container. When I go to the Explorer there is no tool for YOLOv5 .NET. It does show up when I have YOLOv5 6.2 enabled.

I've deleted and reinstalled the container, but the same issue persists.

|

This will be corrected in the next Docker release.

cheers

Chris Maunder

|

No worries. Thanks for the update.

|

I have CPAI on two computers, Inet and Moo. I'm using mesh. All working great.

If I open the dashboard for Inet on Inet, and then open Moo's dashboard on Inet, the Info section for object detection shows the same stats on each dashboard, i.e. the histogram is the same.

If I close one dashboard and then refresh the other, the stats appear correct for that instance.

EDIT: Ignore the above. I didn't wait long enough to let the server update...

I am seeing that the JSON returned for object detection shows "localhost" for the computer I am sending requests to, even from a remote computer.

If I call each server's ping endpoint, the JSON returned shows each hostname correctly.

Hope that makes sense.

modified 20-Feb-24 17:15pm.

|

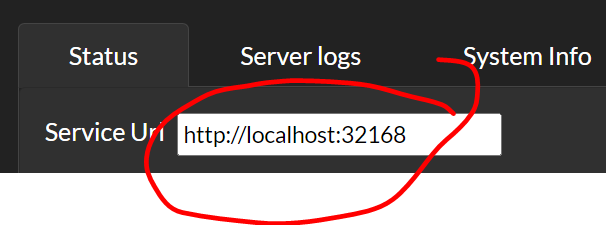

What address is in the Service URL for each dashboard?

cheers

Chris Maunder

|

All my requests are sent to CPAI on the REMOTE computer INET. The response shows "processedBy":"localhost".

2/21/2024 8:11:41 AM ~ HandleAction Complete: Total Elapsed Time: 498 ms

"histogram":{"tv":3,"person":2,"vehicle":15}},"analysisRoundTripMs":102,"processedBy":"localhost"}

"statusData":{"successfulInferences":50,"failedInferences":0,"numInferences":50,"numItemsFound":20,"averageInferenceMs":120.56

"moduleName":"Object Detection (YOLOv5 6.2)","code":200,"command":"detect","executionProvider":"CUDA","canUseGPU":true

"x_max":346,"y_max":328}],"success":true,"processMs":94,"inferenceMs":93,"moduleId":"ObjectDetectionYOLOv5-6.2"

2/21/2024 8:11:41 AM ~ {"message":"Found tv","count":1,"predictions":[{"confidence":0.88525390625,"label":"tv","x_min":224,"y_min":231

2/21/2024 8:11:41 AM ~ Object Detection Result: Hawk Label: tv Confidence: 88.5%

My application resides with CPAI on the LOCAL computer MOO, and mesh handles the routing. The response shows "processedBy":"MOO".

2/21/2024 8:10:33 AM ~ HandleAction Complete: Total Elapsed Time: 361 ms

"averageInferenceMs":164.01639344262296,"histogram":{"tv":5,"vehicle":16}},"analysisRoundTripMs":102,"processedBy":"MOO"}

"statusData":{"successfulInferences":61,"failedInferences":0,"numInferences":61,"numItemsFound":21

"moduleName":"Object Detection (YOLOv5 6.2)","code":200,"command":"detect","executionProvider":"CUDA","canUseGPU":true

"x_max":346,"y_max":327}],"success":true,"processMs":83,"inferenceMs":82,"moduleId":"ObjectDetectionYOLOv5-6.2"

2/21/2024 8:10:33 AM ~ {"message":"Found tv","count":1,"predictions":[{"confidence":0.8840696811676025,"label":"tv","x_min":224,"y_min":232

2/21/2024 8:10:33 AM ~ Object Detection Result: Hawk Label: tv Confidence: 88.4%

|

OK, I see the confusion.

The 'processed by' value answers one question: was the processing done by the local computer, or was it sent off to another machine in the mesh? If it was done locally, it returns 'localhost'; otherwise it returns the name of the other computer. I could rename this to "local machine" or "this machine", but I'm not sure that would resolve the ambiguity. I wanted to know, at a glance, whether a given request was offloaded or processed locally, and returning the name of the machine would mean mentally checking the returned name against the name of the computer I'm looking at.
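A client that talks to several mesh nodes can map `processedBy` back to a machine name for display. A minimal Python sketch, assuming the response JSON has already been parsed into a dict and that `sent_to` is the hostname the request was posted to; the helper names `processing_machine` and `was_offloaded` are hypothetical:

```python
def processing_machine(sent_to: str, response: dict) -> str:
    """Return the name of the machine that actually ran the inference.

    'processedBy' is "localhost" when the server that received the request
    handled it itself; otherwise it holds the name of the mesh peer that did.
    """
    processed_by = response.get("processedBy", "localhost")
    if processed_by == "localhost":
        # "localhost" is relative to the receiving server, not to the client.
        return sent_to
    return processed_by


def was_offloaded(response: dict) -> bool:
    """True if the receiving server forwarded the request to another mesh node."""
    return response.get("processedBy", "localhost") != "localhost"
```

For example, a response from MachineB with `processedBy` set to "localhost" resolves to "MachineB", while any other value is already the name of the peer that did the work.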

cheers

Chris Maunder

|

Thank you. That explains why sending to a remote computer returns localhost. For display, I correct this using the hostname returned by that server's ping endpoint.

|

Hello,

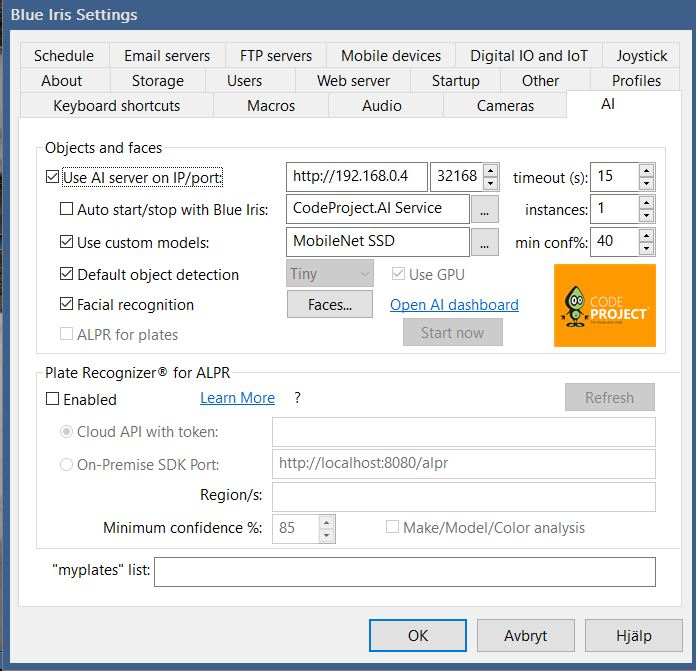

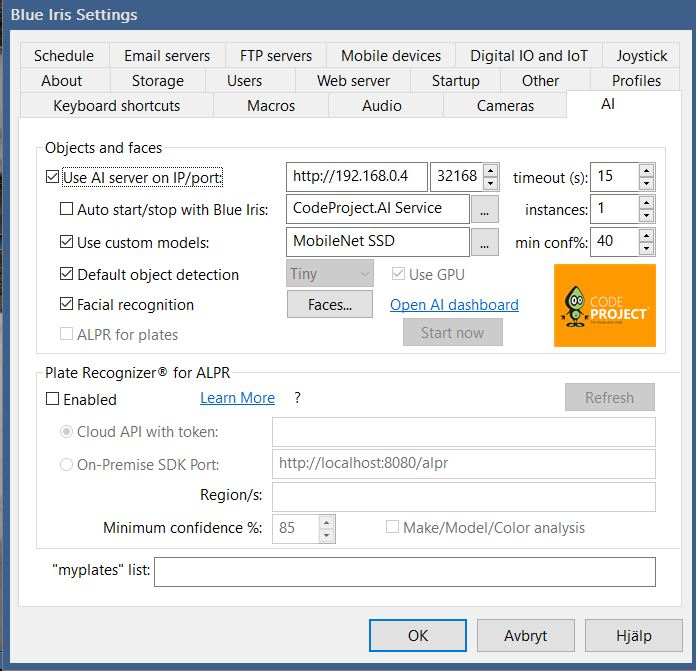

I'm running Blue Iris 5.8.7.5 on a NUC, which uses an RPi 4 with a Coral USB TPU located on the same network.

The server is up and running, and I see in the CP log that it receives requests from BI, but very often I get the error "AI: Alert canceled [AI: error 400]" in the BI log, and very few requests come back to BI with a classification.

What could the issue be?

These are my AI tab settings in BI.

|

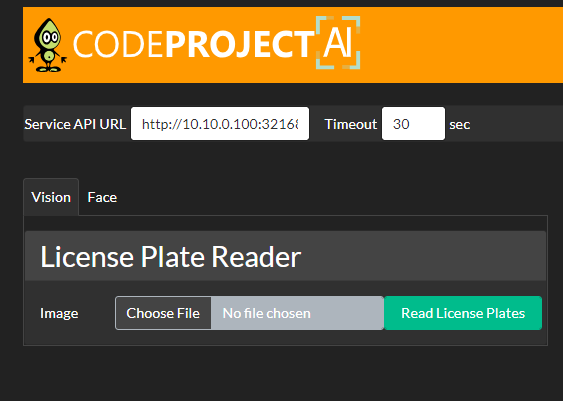

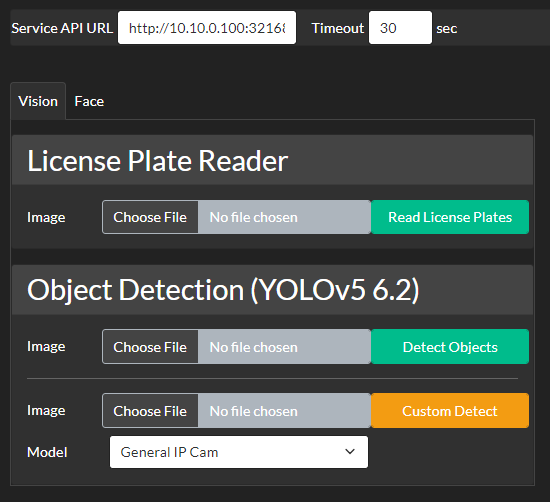

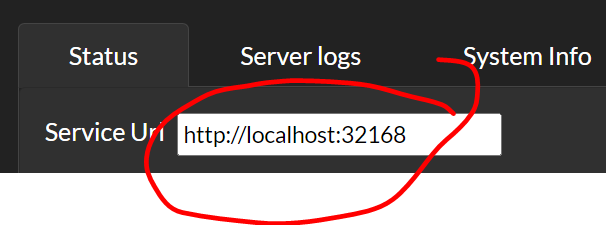

Hi, many thanks for making this nice tool available to the community. Before I start playing with it, can you please tell me whether I can change localhost to the name of the machine where the service will run? This is important for us, as we never install services on developer machines; we use a development server, so we need one install on a remote server that different people can access from their desktops.

Thanks again

|

As long as your firewall will allow it, yes, it'll work fine.

cheers

Chris Maunder

|

OK, thanks. Then where can I change localhost? Is there an app.settings file?

|

CodeProject.AI server will run on whatever machine you install it on, and then to access CodeProject.AI server from a different machine simply reference the server via that machine's hostname.

E.g., if you are on MachineA and have CodeProject.AI running on MachineB, then on MachineA you would browse to something like http://MachineB:32168 to view the dashboard (and all API calls to CodeProject.AI server would be of the form 'http://MachineB:32168/v1/route...').
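As a hedged illustration of that URL pattern, here is a minimal standard-library Python sketch that posts an image to a server on another machine. It assumes the object detection route is `/v1/vision/detection` and that the image goes in a multipart form field named `image`; check the API reference for your server version. `server_url` and `detect_objects` are hypothetical helper names, and port 32168 must be open in the server's firewall.

```python
import json
import urllib.request
import uuid


def server_url(host: str, route: str, port: int = 32168) -> str:
    """Build a CodeProject.AI Server URL from a machine name and an API route."""
    return f"http://{host}:{port}/{route.lstrip('/')}"


def detect_objects(host: str, image_path: str, port: int = 32168) -> dict:
    """POST an image to the (assumed) detection route and return the parsed JSON."""
    boundary = uuid.uuid4().hex
    with open(image_path, "rb") as f:
        image_bytes = f.read()

    # Hand-built multipart/form-data body with a single "image" file field.
    body = (
        f"--{boundary}\r\n"
        'Content-Disposition: form-data; name="image"; filename="image.jpg"\r\n'
        "Content-Type: image/jpeg\r\n\r\n"
    ).encode() + image_bytes + f"\r\n--{boundary}--\r\n".encode()

    req = urllib.request.Request(
        server_url(host, "v1/vision/detection", port),
        data=body,
        headers={"Content-Type": f"multipart/form-data; boundary={boundary}"},
        method="POST",
    )
    with urllib.request.urlopen(req, timeout=30) as resp:
        return json.loads(resp.read())
```

Calling `detect_objects("MachineB", "test.jpg")` from MachineA would then return a dict with fields such as `predictions` and `processedBy`, like the responses posted in this thread.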

cheers

Chris Maunder

|

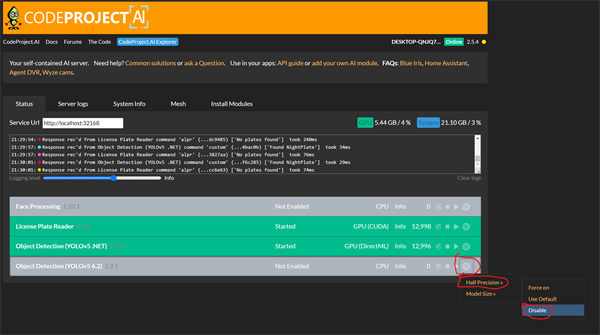

Previously I had the GPU working for Object Detection for Blue Iris, but since the update it won't move off the CPU.

It detects CUDA/GPU during the install, and object detection works. I tried YOLOv5 6.2 and also experimented with YOLOv8.

My GPU is an NVIDIA GTX 1060, on Windows 10.

11:02:20:System: Windows

11:02:20:Operating System: Windows (Microsoft Windows 10.0.19045)

11:02:20:CPUs: Intel(R) Core(TM) i5-6500 CPU @ 3.20GHz (Intel)

11:02:20: 1 CPU x 4 cores. 4 logical processors (x64)

11:02:20:GPU (Primary): NVIDIA GeForce GTX 1060 6GB (6 GiB) (NVIDIA)

11:02:20: Driver: 551.23, CUDA: 12.4 (up to: 12.4), Compute: 6.1, cuDNN: 8.5

11:10:55:ObjectDetectionYOLOv5-6.2: GPU support

11:10:56:ObjectDetectionYOLOv5-6.2: CUDA Present...Yes (CUDA 12.3, cuDNN 8.5)

11:10:56:ObjectDetectionYOLOv5-6.2: ROCm Present...No

11:10:58:ObjectDetectionYOLOv5-6.2: Reading ObjectDetectionYOLOv5-6.2 settings.......Done

11:10:58:ObjectDetectionYOLOv5-6.2: Installing module Object Detection (YOLOv5 6.2) 1.9.1

I tried uninstalling and reinstalling, as well as manually deleting the CodeProject directory and rebooting; none of it seems to work.

|

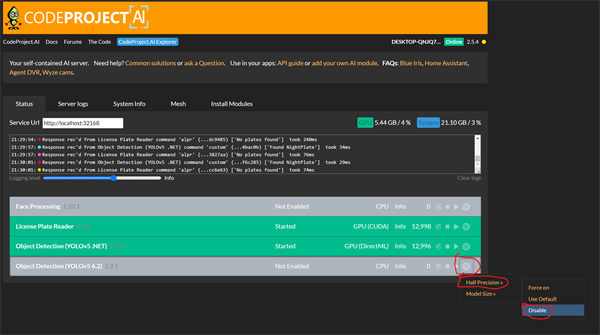

Try disabling Half Precision

|

I tried it. The log noted that it's attempting to use the GPU, but it fails. I noticed that it tried to import a module called "torch" and couldn't find it, so I'll try reinstalling the module.

2:41:06:Sending shutdown request to python/ObjectDetectionYOLOv5-6.2

12:41:11:detect_adapter.py: Object Detection (YOLOv5 6.2) started.

12:41:11:detect_adapter.py: GPU compute capability is 6.1

12:41:11:detect_adapter.py: Using half-precision for the device 'NVIDIA GeForce GTX 1060 6GB'

12:41:11:detect_adapter.py: Inference processing will occur on device 'NVIDIA GeForce GTX 1060 6GB'

12:57:33:detect_adapter.py: Traceback (most recent call last):

12:57:33:detect_adapter.py: File "C:\Program Files\CodeProject\AI\modules\ObjectDetectionYOLOv5-6.2\detect_adapter.py", line 20, in

12:57:33:detect_adapter.py: from detect import do_detection

12:57:33:detect_adapter.py: File "C:\Program Files\CodeProject\AI\modules\ObjectDetectionYOLOv5-6.2\detect.py", line 7, in

12:57:33:detect_adapter.py: import torch

12:57:33:detect_adapter.py: ModuleNotFoundError: No module named 'torch'

modified 19-Feb-24 21:59pm.

|

Looks like the module install didn't complete. Can you please uninstall and then reinstall the Object Detection (YOLOv5 6.2) module via the Modules tab in the dashboard?

cheers

Chris Maunder

|

I uninstalled and reinstalled, and it didn't work; it said it checked and found the torch files.

I moved everything torch-related out of the directory the error refers to and reinstalled, to try to force it to re-download, but it didn't use the GPU or download anything.

But when I copied that data back, it began working. It says it's running on the GPU; is there any way to check?

I know that was quite an inelegant and potentially stuff-breaking process, but I was intending to completely reinstall CodeProject.AI afterwards, and was surprised when it began working.
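One way to check is to ask PyTorch directly from the module's own Python environment. A minimal sketch, assuming you run it with the Python interpreter inside the YOLOv5 6.2 module's virtual environment (its exact location varies by install, so treat that as an assumption to verify). `torch.cuda.is_available()` and `torch.cuda.get_device_name()` are standard PyTorch calls; `describe_torch_device` is a hypothetical helper name. The detection response also reports an `executionProvider` field (e.g. "CUDA" in the responses posted in this thread), which shows what the module actually used.

```python
import importlib.util


def describe_torch_device() -> str:
    """Report whether PyTorch is importable and whether it can see a CUDA GPU."""
    if importlib.util.find_spec("torch") is None:
        # Same condition that produces "ModuleNotFoundError: No module named 'torch'".
        return "torch is not installed in this Python environment"

    import torch  # imported lazily so the check itself never raises ImportError

    if not torch.cuda.is_available():
        return f"torch {torch.__version__} installed, but CUDA is not available (CPU only)"

    name = torch.cuda.get_device_name(0)
    return f"torch {torch.__version__} can use CUDA device 0: {name}"


if __name__ == "__main__":
    print(describe_torch_device())
```

If this reports CPU only while the dashboard claims GPU, the torch build in that environment is likely a CPU-only wheel.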

|

Try using the Object Detection (YOLOv5 .NET) module. This module will also use your NVIDIA GPU, and it might even be faster than the other modules.

|

I gave it a go and it worked on the GPU just fine, but it was slower: about 60 ms, whereas YOLOv5 6.2 was more consistently 40 ms.

|

I'm also struggling to get GPU mode to show as active since 2.5.4. I tried an uninstall and a manual clean of the folders, reinstalled CUDA 11.8 and cuDNN, disabled half precision, and tried different model sizes, all to no avail.

I'm using a GTX 1650. I'm not seeing any errors during installation or startup; it just doesn't want to go into GPU mode.

|

I run CP on an RPi 5 using Docker and the Coral USB version.

I really want to use @MikeLud's GitHub - MikeLud/CodeProject.AI-Custom-IPcam-Models.

A while back I heard that Mike was training the models using YOLOv8, and the model files do exist on his GitHub, but they don't show up in the CP Install Modules section.

Can someone tell me whether and how I can add the models manually, which files are needed, and where I should store them? Remember that I am using Docker. Are any other configuration changes needed for such an install?

Thanks in advance.
