|

Can you please try uninstalling and reinstalling the Coral module? We've applied a fix (we hope).

Thanks,

Sean Ewington

CodeProject

modified 14-Mar-24 12:17pm.

|

|

|

|

|

Have the exact same problem. Goes down multiple times a day. The Docker container should be catching this and restarting itself, but it's not.

|

|

|

|

|

So far, the latest update has fixed the issue for me.

|

|

|

|

|

Hasn't for me, but I've heard they're working on an update to auto-restart when it goes down, so that's cool

|

|

|

|

|

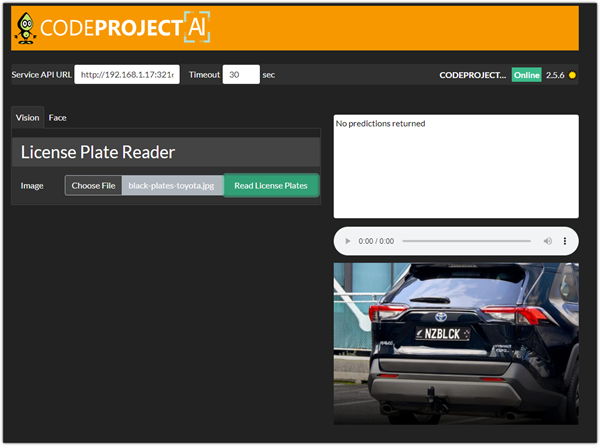

LPR 3.0.2 installed successfully with no errors; however, no plates are detected when queries are passed to CPAI from Agent.

Loading CPAI Explorer and processing images of license plates returns no predictions.

I have face detection also running on this VM with no issues.

Server version: 2.5.6

System: Windows

Operating System: Windows (Microsoft Windows 10.0.19045)

CPUs: AMD FX(tm)-6300 Six-Core Processor (AMD)

1 CPU x 2 cores. 2 logical processors (x64)

GPU (Primary): VirtualBox Graphics Adapter (WDDM) (Oracle Corporation)

Driver: 6.0.18.0

System RAM: 4 GiB

Platform: Windows

BuildConfig: Release

Execution Env: Native

Runtime Env: Production

Runtimes installed:

.NET runtime: 7.0.10

.NET SDK: Not found

Default Python: Not found

Go: Not found

NodeJS: Not found

Video adapter info:

VirtualBox Graphics Adapter (WDDM):

Driver Version 6.0.18.0

Video Processor VirtualBox VESA BIOS

System GPU info:

GPU 3D Usage 0%

GPU RAM Usage 0

Global Environment variables:

CPAI_APPROOTPATH = <root>

CPAI_PORT = 32168

19:54:11:Module ALPR installed successfully.

19:54:11:

19:54:11:Module 'License Plate Reader' 3.0.2 (ID: ALPR)

19:54:11:Valid: True

19:54:11:Module Path: <root>\modules\ALPR

19:54:11:AutoStart: True

19:54:11:Queue: alpr_queue

19:54:11:Runtime: python3.9

19:54:11:Runtime Loc: Local

19:54:11:FilePath: ALPR_adapter.py

19:54:11:Pre installed: False

19:54:11:Start pause: 3 sec

19:54:11:Parallelism: 0

19:54:11:LogVerbosity:

19:54:11:Platforms: all

19:54:11:GPU Libraries: installed if available

19:54:11:GPU Enabled: enabled

19:54:11:Accelerator:

19:54:11:Half Precis.: enable

19:54:11:Environment Variables

19:54:11:AUTO_PLATE_ROTATE = True

19:54:11:MIN_COMPUTE_CAPABILITY = 6

19:54:11:MIN_CUDNN_VERSION = 7

19:54:11:OCR_OPTIMAL_CHARACTER_HEIGHT = 60

19:54:11:OCR_OPTIMAL_CHARACTER_WIDTH = 30

19:54:11:OCR_OPTIMIZATION = True

19:54:11:PLATE_CONFIDENCE = 0.7

19:54:11:PLATE_RESCALE_FACTOR = 2

19:54:11:PLATE_ROTATE_DEG = 0

19:54:11:

19:54:11:Started License Plate Reader module

19:54:11:Installer exited with code 0

19:54:15:Module ALPR started successfully.

modified 7-Mar-24 10:23am.

|

|

|

|

|

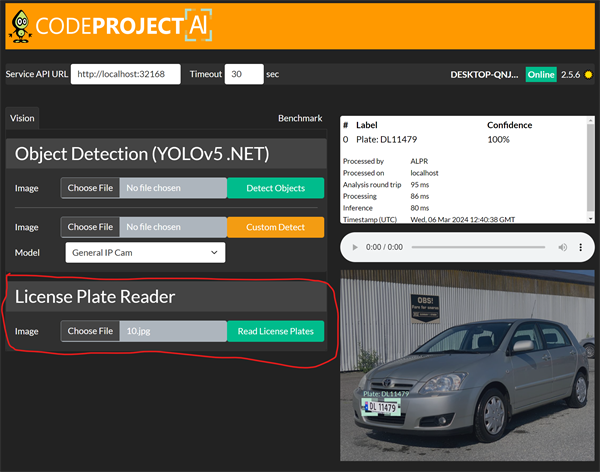

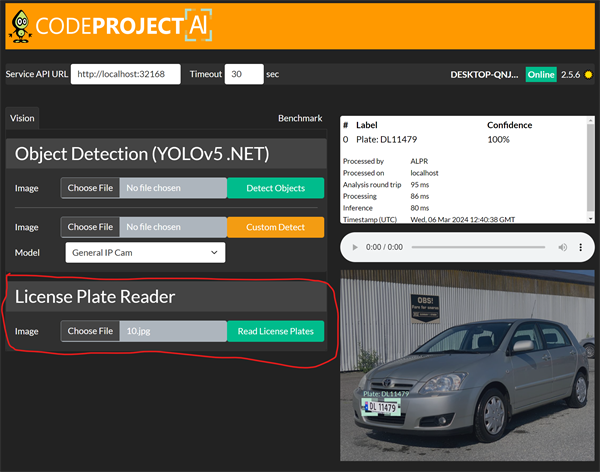

Does it work if you run a test image like this one?

|

|

|

|

|

No, it doesn't detect anything.

|

|

|

|

|

Are you seeing any errors in the log? Also, which Object Detection module are you using?

|

|

|

|

|

When I upload an image and choose to read license plates, the log returns a single line:

16:33:59:Response rec'd from License Plate Reader command 'alpr' (...d722a0)

|

|

|

|

|

Are you running the Object Detection (YOLOv5 6.2) or the Object Detection (YOLOv5 .NET) module? The LPR module needs one of these modules to work.
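As a rough illustration of the dependency described above, here is a minimal Python sketch that checks whether LPR has a detector alongside it. The module display names are taken from this thread; the helper `lpr_ready` and the idea of passing in a list of running module names are hypothetical and are not part of the CodeProject.AI Server API.

```python
# Display names as they appear in this thread.
LPR = "License Plate Reader"
DETECTORS = (
    "Object Detection (YOLOv5 6.2)",
    "Object Detection (YOLOv5 .NET)",
)


def lpr_ready(running_modules):
    """Return True if LPR is running together with at least one detector.

    `running_modules` is any iterable of module display names, for example
    copied by hand from the server dashboard (hypothetical input).
    """
    running = set(running_modules)
    if LPR not in running:
        return False
    # LPR needs one of the YOLOv5 object detection modules to be running.
    return any(detector in running for detector in DETECTORS)


if __name__ == "__main__":
    # Face detection alone does not satisfy the requirement.
    print(lpr_ready(["License Plate Reader", "Face Processing"]))
    # Starting the .NET detector does.
    print(lpr_ready(["License Plate Reader", "Object Detection (YOLOv5 .NET)"]))
```

If the check fails, starting either object detection module from the dashboard should be enough; no LPR reinstall is implied by this sketch.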

|

|

|

|

|

Ah, now that would make sense! I did not have any object detection module running. I thought I was fine because face detection was already running successfully on the same host.

I have started the .NET module and license plate detection is now working. Apologies for bothering you.

|

|

|

|

|

|

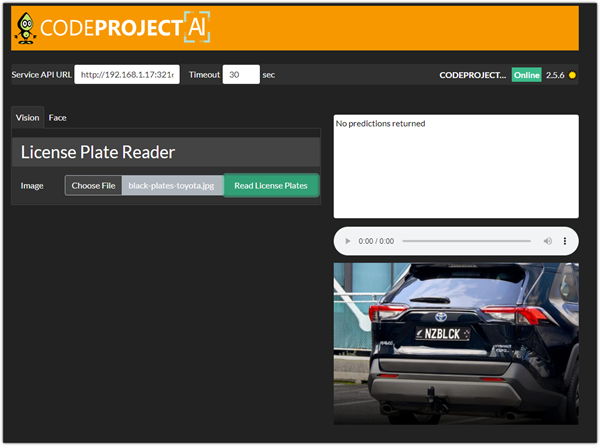

I have YOLOv5 6.2 running and it works perfectly with BI.

But LPR doesn't work. I have tested it several times and the result is always "No Predictions Returned". YOLOv5 6.2 returns "DayPlate found" and marks the plate correctly if I run it with the license-plate model.

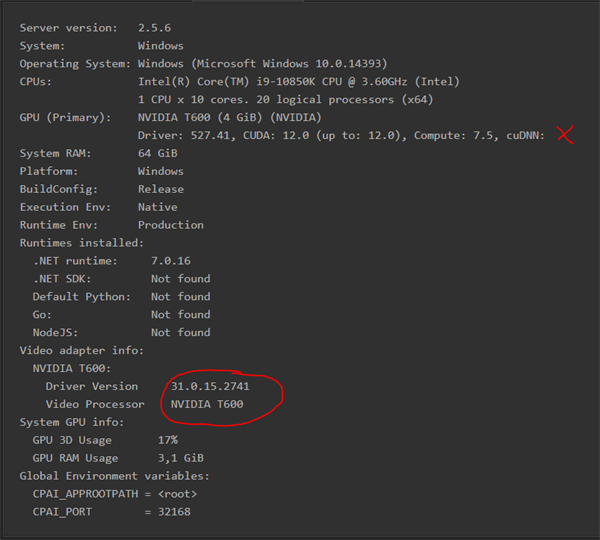

During installation I saw an error related to cuDNN. CUDA 12.2 is installed and YOLOv5 6.2 is running in GPU mode.

Is cuDNN mandatory for LPR? I skipped installing it because "Face Processing" and "YOLOv5 6.2" didn't need it.

|

|

|

|

|

It is mandatory to have cuDNN installed. Also, depending on the age of your GPU, CUDA 12.2 may not work and you will need CUDA 11.8 instead.

modified 7-Mar-24 9:08am.
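To see how old a GPU is in CUDA terms, one option is to read its compute capability. The sketch below is only an illustration: it assumes an NVIDIA driver recent enough that `nvidia-smi` supports the `compute_cap` query field, and it assumes the `MIN_COMPUTE_CAPABILITY = 6` value from the LPR module's environment listing earlier in this thread means "6.0 or higher". The function names are hypothetical, and the check says nothing definitive about which CUDA version a given card needs.

```python
import subprocess

# Assumption: the module's MIN_COMPUTE_CAPABILITY = 6 means 6.0 or higher.
MIN_COMPUTE_CAPABILITY = 6.0


def parse_compute_caps(csv_text):
    """Parse 'name, compute_cap' lines into a list of (name, float) tuples."""
    gpus = []
    for line in csv_text.strip().splitlines():
        # Split on the last comma so a comma in the GPU name cannot break parsing.
        name, cap = line.rsplit(",", 1)
        gpus.append((name.strip(), float(cap)))
    return gpus


def query_gpus():
    """Ask nvidia-smi for each GPU's name and compute capability."""
    result = subprocess.run(
        ["nvidia-smi", "--query-gpu=name,compute_cap", "--format=csv,noheader"],
        capture_output=True,
        text=True,
        check=True,
    )
    return parse_compute_caps(result.stdout)


def meets_minimum(compute_cap, minimum=MIN_COMPUTE_CAPABILITY):
    """Return True if the GPU's compute capability reaches the minimum."""
    return compute_cap >= minimum
```

Calling `query_gpus()` on the machine running the server and passing each capability to `meets_minimum` gives a quick first check before reinstalling CUDA or cuDNN.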

|

|

|

|

|

Currently I'm using "platerecognizer", but BI hasn't handled its results properly for a couple of months now. The only result is "DayPlate", and although the plate text is returned correctly, it fails to cancel the alerts for my own car. This used to work in an earlier version.

Now I want to test this CPAI solution, which is much better, especially because I don't like sending this kind of data to cloud storage.

I have downloaded the cuDNN installer and will test it later.

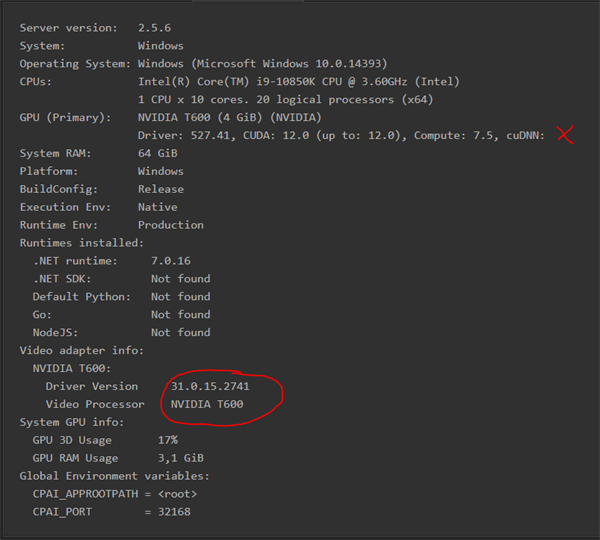

Here is the system info because of the GPU:

|

|

|

|

|

With your GPU, I recommend using CUDA 11.8, not CUDA 12.0.

|

|

|

|

|

I got it to run after installing cuDNN, but all sample plates were read with wrong results.

Unfortunately, it is currently not reliable enough to read German license plates from my 5 MP cameras.

"platerecognizer.com" can read them, but with it BI fails on the "cancel alert" rule, as mentioned above. I can't verify whether LPR would work in BI if the results for the test images aren't correct.

|

|

|

|

|

Try uninstalling the License Plate Reader module then reinstall.

|

|

|

|

|

The installation was OK, without any errors in the log about cuDNN or CUDA. It worked, but the results were wrong. "Badges" from the admission office, "blanks" and "-" are detected as 8, ...

And the samples from my 5 MP cameras are really good. I also enabled OCR improvement, with no change.

It seems not to work with German license plates.

I'll send you a PM with samples.

|

|

|

|

|

Got a new error message in latest version, one I don't recall seeing before. Not crashing level of problem but thought I would share...

08:19:37:Object Detection (YOLOv5 6.2): [RuntimeError] : Traceback (most recent call last):
  File "C:\Program Files\CodeProject\AI\modules\ObjectDetectionYOLOv5-6.2\detect.py", line 141, in do_detection
    det = detector(img, size=640)
  File "C:\Program Files\CodeProject\AI\runtimes\bin\windows\python37\venv\lib\site-packages\torch\nn\modules\module.py", line 1190, in _call_impl
    return forward_call(*input, **kwargs)
  File "C:\Program Files\CodeProject\AI\runtimes\bin\windows\python37\venv\lib\site-packages\torch\autograd\grad_mode.py", line 27, in decorate_context
    return func(*args, **kwargs)
  File "C:\Program Files\CodeProject\AI\runtimes\bin\windows\python37\venv\lib\site-packages\yolov5\models\common.py", line 705, in forward
    y = self.model(x, augment=augment) # forward
  File "C:\Program Files\CodeProject\AI\runtimes\bin\windows\python37\venv\lib\site-packages\torch\nn\modules\module.py", line 1190, in _call_impl
    return forward_call(*input, **kwargs)
  File "C:\Program Files\CodeProject\AI\runtimes\bin\windows\python37\venv\lib\site-packages\yolov5\models\common.py", line 515, in forward
    y = self.model(im, augment=augment, visualize=visualize) if augment or visualize else self.model(im)
  File "C:\Program Files\CodeProject\AI\runtimes\bin\windows\python37\venv\lib\site-packages\torch\nn\modules\module.py", line 1190, in _call_impl
    return forward_call(*input, **kwargs)
  File "C:\Program Files\CodeProject\AI\runtimes\bin\windows\python37\venv\Lib\site-packages\yolov5\models\yolo.py", line 209, in forward
    return self._forward_once(x, profile, visualize) # single-scale inference, train
  File "C:\Program Files\CodeProject\AI\runtimes\bin\windows\python37\venv\Lib\site-packages\yolov5\models\yolo.py", line 121, in _forward_once
    x = m(x) # run
  File "C:\Program Files\CodeProject\AI\runtimes\bin\windows\python37\venv\lib\site-packages\torch\nn\modules\module.py", line 1190, in _call_impl
    return forward_call(*input, **kwargs)
  File "C:\Program Files\CodeProject\AI\runtimes\bin\windows\python37\venv\Lib\site-packages\yolov5\models\yolo.py", line 75, in forward
    wh = (wh * 2) ** 2 * self.anchor_grid[i] # wh
RuntimeError: The size of tensor a (60) must match the size of tensor b (56) at non-singleton dimension 2

modified 5-Mar-24 11:42am.
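For anyone reading the traceback above: the final line is a broadcasting failure, meaning the two tensors in `wh * self.anchor_grid[i]` disagree at index 2 (60 versus 56) and neither size is 1. The sketch below is a plain-Python illustration of that rule, not the PyTorch implementation. The helper name `broadcast_mismatch` is hypothetical, and the example shapes are assumptions chosen only to reproduce the 60 versus 56 mismatch; the real tensor shapes are not shown in the log.

```python
def broadcast_mismatch(shape_a, shape_b):
    """Return the index of the first dimension that cannot be broadcast.

    Shapes are right-aligned, as in NumPy and PyTorch broadcasting. Two sizes
    are compatible when they are equal or when either of them is 1.
    Returns None if the shapes are compatible. The index refers to the
    longer (padded) shape, counted from the left.
    """
    rank = max(len(shape_a), len(shape_b))
    # Pad the shorter shape with leading 1s so both have the same rank.
    a = (1,) * (rank - len(shape_a)) + tuple(shape_a)
    b = (1,) * (rank - len(shape_b)) + tuple(shape_b)
    for index, (size_a, size_b) in enumerate(zip(a, b)):
        if size_a != size_b and size_a != 1 and size_b != 1:
            return index
    return None


if __name__ == "__main__":
    # Illustrative shapes only (assumed): grid height 60 vs a cached grid of 56.
    wh_shape = (1, 3, 60, 80, 2)
    anchor_grid_shape = (1, 3, 56, 80, 2)
    print(broadcast_mismatch(wh_shape, anchor_grid_shape))
```

With these assumed shapes the mismatch is at index 2, which matches the position reported in the error. One possible reading is that the anchor grid was built for an image of a different height than the one being processed, but the log alone does not confirm the cause.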

|

|

|

|

|

Thanks very much for your report. Could you please share your System Info tab from your CodeProject.AI Server dashboard?

Thanks,

Sean Ewington

CodeProject

|

|

|

|

|

What size model are you using, and how much RAM is your GPU using? (I'm wondering if this is a memory issue)

cheers

Chris Maunder

|

|

|

|

|

Hello,

I am running a Docker instance of CPAI. The stock Docker container contains the Coral module at version 2.1.3. Updating the module works perfectly, but after a reboot of the container the version switches back to 2.1.3. Does anybody else have this issue?

Server version: 2.5.4

System: Docker

Operating System: Linux (Ubuntu 22.04)

CPUs: 1 CPU. (Arm64)

System RAM: 8 GiB

Platform: RaspberryPi

BuildConfig: Release

Execution Env: Docker

Runtime Env: Production

.NET framework: .NET 7.0.16

Default Python: 3.10

Go Version:

Video adapter info:

System GPU info:

GPU 3D Usage 0%

GPU RAM Usage 0

Global Environment variables:

CPAI_APPROOTPATH = <root>

CPAI_PORT = 32168

My stack file:

version: "3.8"
services:
  ai-server:
    image: codeproject/ai-server:rpi64-2.5.4
    container_name: CodeprojectAI
    privileged: true
    ports:
      - "32168:32168"
    volumes:
      - /dev/bus/usb:/dev/bus/usb
      - /data/compose/codeprojectai/data:/app/data
      - /data/compose/codeprojectai/ai:/etc/codeproject/ai
      - /data/compose/codeprojectai/modules:/app/modules

Thanks

Tbs

modified 6-Mar-24 11:09am.

|

|

|

|

|

Thanks for the report. Does this happen every time you reboot the container? Does it happen to other modules?

Thanks,

Sean Ewington

CodeProject

|

|

|

|

|

Hello Sean,

It happens after each reboot. I haven't seen this behaviour with other modules so far.

|

|

|

|
