Intel RealSense D415/435: Coordinate Mapping in C#

During the past few months, I have been experimenting heavily with the Intel RealSense D415 and D435 depth cameras. Today, I am going to show you how to transform between their different coordinate systems. The RealSense D415/435 is a low-cost device that can enhance your applications with 3D perception. Its technical specs are displayed below:

| Feature | Specification |
|---|---|
| Use Environment | Indoor/Outdoor |
| Depth Technology | Active IR Stereo (Global Shutter) |
| Main Intel® RealSense component | Intel® RealSense Vision Processor D4; Intel® RealSense module D430 |
| Depth FOV (Horizontal x Vertical x Diagonal) | 86° x 57° x 94° (+/- 3°) |
| Depth Stream Output Resolution | Up to 1280 x 720 |
| Depth Stream Output Frame Rate | Up to 90 fps |
| Minimum Depth Distance (Min-Z) | 0.2 m |
| Sensor Shutter Type | Global shutter |
| Maximum Range | ~10.0 m |
| RGB Sensor Resolution and Frame Rate | 1920 x 1080 at 30 fps |
| RGB Sensor FOV (Horizontal x Vertical x Diagonal) | 69.4° x 42.5° x 77° (+/- 3°) |
| Camera Dimension (Length x Depth x Height) | 90 mm x 25 mm x 25 mm |
| Connectors | USB 3.0 Type-C |
| Mounting Mechanism | One 1/4-20 UNC thread mounting point; two M3 thread mounting points |

What is Coordinate Mapping?

One of the most common tasks when using depth cameras is mapping 3D world-space coordinates to 2D screen-space coordinates (and vice-versa).

The coordinate system in the 3D world-space is measured in meters. So, a 3D point is represented as a set of X, Y, and Z coordinates, which refer to the horizontal, vertical, and depth axes, respectively. The origin point (0, 0, 0) of the 3D coordinate system is the position of the camera.

The coordinate system in the 2D screen-space is measured in pixels. A 2D point is represented as a set of X and Y coordinates, which refer to its distance from the left and top edges of the frame, respectively. The origin point (0, 0) of the 2D system is the top-left corner of the frame.

RealSense consists of an RGB and a Depth sensor, so there are two distinct frame types, one generated by each sensor. For now, we’ll only focus on the Depth frames.

Coordinate mapping is the process of converting between the 3D and the 2D system.

Such transformations require knowledge of the internal configuration of the camera (intrinsic and extrinsic parameters). These parameters specify properties such as the distortion of the lens, the focal length, the image format, the rotation matrix, etc. As a result, the required mathematical transformations are usually performed internally by the SDK. Indeed, Intel has exposed the transformations as part of their C++ and Python frameworks.

If you’ve been following this blog, you know that I am building most of my commercial applications in C# and Unity3D. So, it came as a big surprise to see that the coordinate mapping functionality was not part of the official C# framework. This is why I decided to port the original C++ projection & deprojection code to C#.

Thankfully, the C# API already includes an Intrinsics struct, which provides us with the raw camera intrinsic parameters.

Background (lenses, distortion, photography)

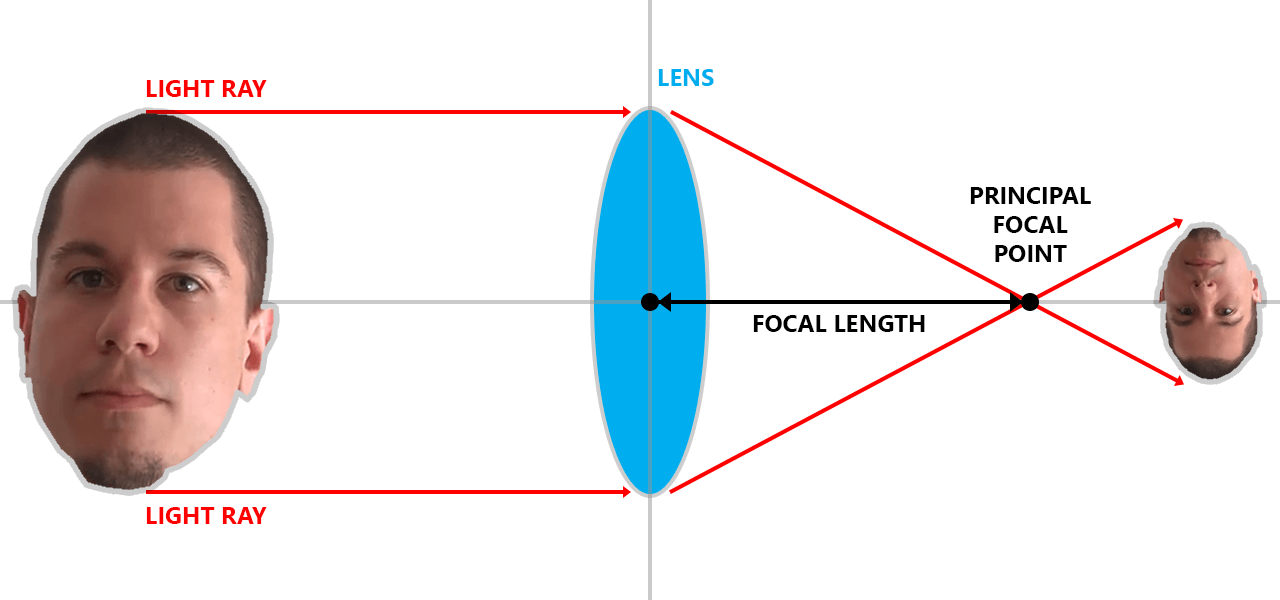

Keep in mind that we’ll be using some terms that are common in photography and photogrammetry. The RealSense intrinsic parameters are the following:

Width/Height

The width and height parameters are the dimensions of the camera image, measured in pixels.

Principal Point

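You can think of the principal point as the point on the image where the optical axis of the lens meets the sensor. It usually lies close to the center of the frame. The coordinates of the principal point, measured in pixels from the left and top edges of the image, are stored in the ppx and ppy values.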

Focal Length

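Focal length is the distance from the center of the lens to the image plane, where the principal point lies. It is represented with the fx and fy parameters, which express that distance in pixel units (as multiples of the pixel width and height, respectively).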

The following illustration will help you understand the concept visually:

Distortion model

Lenses do not see the world as a perfect rectangular frame. Instead, the captured frame deviates from the ideal rectilinear projection. This deviation is what causes fish-eye effects. Distortion can be “corrected” if we know its coefficients. The correction uses the Brown-Conrady transformations.

Distortion Coefficients

The distortion coefficients are values that describe the distortion in mathematical terms. They are stored in the coeffs array.
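For readers who prefer formulas, here is a rough sketch of how these parameters combine when a 3D point (X, Y, Z) is projected to a pixel (u, v). It mirrors the Modified Brown-Conrady branch of the projection code shown later in this post. The symbols k1, k2, k3 (radial) and p1, p2 (tangential) are labels assumed here for illustration, on the assumption that coeffs is ordered as [k1, k2, p1, p2, k3]. When no distortion model applies, the distorted coordinates simply equal x and y.

$$
\begin{aligned}
x &= X / Z, \qquad y = Y / Z, \qquad r^2 = x^2 + y^2 \\
f &= 1 + k_1 r^2 + k_2 r^4 + k_3 r^6 \\
x' &= f\,x, \qquad y' = f\,y \\
x_d &= x' + 2 p_1 x' y' + p_2 \left(r^2 + 2 x'^2\right) \\
y_d &= y' + 2 p_2 x' y' + p_1 \left(r^2 + 2 y'^2\right) \\
u &= f_x\, x_d + pp_x, \qquad v = f_y\, y_d + pp_y
\end{aligned}
$$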

If all this seems too hard to understand, that’s because it is. Don’t worry, though. I promise I’ll do the math for you.

Prerequisites

To test the source code, you’ll need a RealSense camera, as well as the official RealSense SDK for C# or Unity.

- RealSense D435 camera or RealSense D415 camera

- RealSense SDK

- RealSense Unity package

- RealSense Source code

The recommended development environment is Visual Studio 2017 and Unity3D.

Mapping 3D to 2D

First, we are going to convert 3D world coordinates to 2D camera coordinates. To encapsulate the 3D coordinates, I’ve created a simple Vector3D struct (X, Y, Z floating-point values). To represent the 2D coordinates, I’ve created a Vector2D struct (X, Y floating-point values).

```csharp
public struct Vector3D
{
    public float X;
    public float Y;
    public float Z;
}

public struct Vector2D
{
    public float X;
    public float Y;
}
```

Obviously, you are free to use the native .NET or Unity vector structures if you want to.
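If you prefer the native .NET types, a small set of conversion helpers is enough. The following is only a sketch: the ToNumerics and ToVector3D method names are hypothetical, and the two structs are repeated here just so the snippet compiles on its own. It relies on the Vector2 and Vector3 types from System.Numerics.

```csharp
using System;
using System.Numerics;

// Repeated from above only so this sketch is self-contained.
public struct Vector3D { public float X, Y, Z; }
public struct Vector2D { public float X, Y; }

// Hypothetical helpers for moving between the custom structs and System.Numerics.
public static class VectorConversions
{
    public static Vector3 ToNumerics(this Vector3D v) => new Vector3(v.X, v.Y, v.Z);

    public static Vector2 ToNumerics(this Vector2D v) => new Vector2(v.X, v.Y);

    public static Vector3D ToVector3D(this Vector3 v) =>
        new Vector3D { X = v.X, Y = v.Y, Z = v.Z };
}

public static class Program
{
    public static void Main()
    {
        var point = new Vector3D { X = 3f, Y = 4f, Z = 0f };

        // Once converted, the built-in vector math is available.
        Console.WriteLine(point.ToNumerics().Length()); // 5
    }
}
```

The same pattern should carry over to Unity's Vector3 and Vector2, which also expose a constructor taking the individual components.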

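The following method extends the Intrinsics struct. It takes a 3D point as input and outputs the corresponding 2D coordinates. Note that the method does not validate its input: a point with Z equal to zero causes a floating-point division by zero, which yields NaN or infinite pixel coordinates, so make sure the depth is valid before calling it.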

```csharp
public static Vector2D Map3DTo2D(this Intrinsics intrinsics, Vector3D point)
{
    Vector2D pixel = new Vector2D();

    // Normalize by depth to get coordinates on the image plane.
    float x = point.X / point.Z;
    float y = point.Y / point.Z;

    if (intrinsics.model == Distortion.ModifiedBrownConrady)
    {
        // Radial distortion factor.
        float r2 = x * x + y * y;
        float f = 1f + intrinsics.coeffs[0] * r2 + intrinsics.coeffs[1] * r2 * r2 + intrinsics.coeffs[4] * r2 * r2 * r2;

        x *= f;
        y *= f;

        // Tangential distortion.
        float dx = x + 2f * intrinsics.coeffs[2] * x * y + intrinsics.coeffs[3] * (r2 + 2 * x * x);
        float dy = y + 2f * intrinsics.coeffs[3] * x * y + intrinsics.coeffs[2] * (r2 + 2 * y * y);

        x = dx;
        y = dy;
    }

    if (intrinsics.model == Distortion.Ftheta)
    {
        float r = (float)Math.Sqrt(x * x + y * y);
        float rd = (1f / intrinsics.coeffs[0] * (float)Math.Atan(2f * r * (float)Math.Tan(intrinsics.coeffs[0] / 2f)));

        x *= rd / r;
        y *= rd / r;
    }

    // Scale by the focal length and shift by the principal point to get pixels.
    pixel.X = x * intrinsics.fx + intrinsics.ppx;
    pixel.Y = y * intrinsics.fy + intrinsics.ppy;

    return pixel;
}
```
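To make the arithmetic concrete, here is a worked example of the final projection step with made-up numbers. It is only a sketch: PinholeIntrinsics is a hypothetical stand-in for the SDK's Intrinsics struct (so the snippet runs without a camera), the focal length and principal point values are invented, and no distortion model is applied.

```csharp
using System;

// Hypothetical stand-in for the SDK's Intrinsics struct, holding only the
// four pinhole parameters. The real values come from the camera.
public struct PinholeIntrinsics
{
    public float fx, fy;   // focal length, in pixels
    public float ppx, ppy; // principal point, in pixels
}

public static class ProjectionExample
{
    // Same arithmetic as Map3DTo2D when no distortion branch is taken.
    public static (float X, float Y) Project(PinholeIntrinsics i, float x, float y, float z)
    {
        float nx = x / z; // normalized image-plane coordinates
        float ny = y / z;

        return (nx * i.fx + i.ppx, ny * i.fy + i.ppy);
    }

    public static void Main()
    {
        // Invented values for a 640x480 frame.
        var intrinsics = new PinholeIntrinsics { fx = 600f, fy = 600f, ppx = 320f, ppy = 240f };

        // A point at X = 0.5 m, Y = 0.25 m, and 2 m in front of the camera.
        var pixel = Project(intrinsics, 0.5f, 0.25f, 2f);

        Console.WriteLine($"{pixel.X}, {pixel.Y}"); // 470, 315
    }
}
```

The point lands 150 pixels to the right of and 75 pixels below the principal point: 0.5 / 2 * 600 = 150 and 0.25 / 2 * 600 = 75.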

Mapping 2D to 3D

Now, we are going to do the opposite: map a 2D point to the 3D space. To do so, we need to know a couple of things:

- The X and Y of the 2D point, and

- The depth of the 2D point

The depth of the desired point can be found by calling the GetDistance() method of the DepthFrame class.

```csharp
float depth = depthFrame.GetDistance(x, y);
```

Given these inputs, the following method will transform the 2D screen coordinates to 3D world coordinates:

```csharp
public static Vector3D Map2DTo3D(this Intrinsics intrinsics, Vector2D pixel, float depth)
{
    Vector3D point = new Vector3D();

    // Shift by the principal point and divide by the focal length
    // to get normalized image-plane coordinates.
    float x = (pixel.X - intrinsics.ppx) / intrinsics.fx;
    float y = (pixel.Y - intrinsics.ppy) / intrinsics.fy;

    if (intrinsics.model == Distortion.InverseBrownConrady)
    {
        // Undo the lens distortion.
        float r2 = x * x + y * y;
        float f = 1 + intrinsics.coeffs[0] * r2 + intrinsics.coeffs[1] * r2 * r2 + intrinsics.coeffs[4] * r2 * r2 * r2;
        float ux = x * f + 2 * intrinsics.coeffs[2] * x * y + intrinsics.coeffs[3] * (r2 + 2 * x * x);
        float uy = y * f + 2 * intrinsics.coeffs[3] * x * y + intrinsics.coeffs[2] * (r2 + 2 * y * y);

        x = ux;
        y = uy;
    }

    // Scale by the depth to get world coordinates in meters.
    point.X = depth * x;
    point.Y = depth * y;
    point.Z = depth;

    return point;
}
```
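One practical detail: a depth of zero generally means the sensor has no data for that pixel, and deprojecting it would just return the camera origin. The sketch below wraps the same arithmetic in a guard. PinholeIntrinsics and TryDeproject are hypothetical names, the intrinsic values are invented, and distortion is ignored, so treat it as an illustration rather than a replacement for the method above.

```csharp
using System;

// Hypothetical stand-in for the SDK's Intrinsics struct (pinhole parameters only).
public struct PinholeIntrinsics
{
    public float fx, fy;   // focal length, in pixels
    public float ppx, ppy; // principal point, in pixels
}

public static class DeprojectionExample
{
    // Same arithmetic as Map2DTo3D without distortion, but reports
    // failure instead of returning a point when the depth is not positive.
    public static bool TryDeproject(PinholeIntrinsics i, float px, float py, float depth,
                                    out (float X, float Y, float Z) point)
    {
        point = (0f, 0f, 0f);

        if (depth <= 0f)
        {
            return false; // no usable depth at this pixel
        }

        float x = (px - i.ppx) / i.fx;
        float y = (py - i.ppy) / i.fy;

        point = (depth * x, depth * y, depth);
        return true;
    }

    public static void Main()
    {
        // Invented values for a 640x480 frame.
        var intrinsics = new PinholeIntrinsics { fx = 600f, fy = 600f, ppx = 320f, ppy = 240f };

        if (TryDeproject(intrinsics, 470f, 315f, 2f, out var point))
        {
            // Invariant formatting keeps the decimal separator a dot.
            Console.WriteLine(FormattableString.Invariant($"{point.X}, {point.Y}, {point.Z}")); // 0.5, 0.25, 2
        }

        Console.WriteLine(TryDeproject(intrinsics, 470f, 315f, 0f, out _)); // False
    }
}
```

With these numbers, pixel (470, 315) at a depth of 2 m maps to the 3D point (0.5, 0.25, 2).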

Mapping 2D color to depth

Finally, there is another coordinate mapping scenario: converting between 2D color coordinates and 2D depth coordinates. The C# SDK provides the Align class for this purpose, so you can refer to the official documentation for usage instructions.

Summary

This is it, folks! For your reference, here is the complete source code:

```csharp
using Intel.RealSense;
using System;

namespace Intel.RealSense.Extensions
{
    /// <summary>
    /// Converts between 2D and 3D RealSense coordinates.
    /// </summary>
    public static class CoordinateMapper
    {
        /// <summary>
        /// Maps the specified 3D point to the 2D space.
        /// </summary>
        /// <param name="intrinsics">The camera intrinsics to use.</param>
        /// <param name="point">The 3D point to map.</param>
        /// <returns>The corresponding 2D point.</returns>
        public static Vector2D Map3DTo2D(this Intrinsics intrinsics, Vector3D point)
        {
            Vector2D pixel = new Vector2D();

            float x = point.X / point.Z;
            float y = point.Y / point.Z;

            if (intrinsics.model == Distortion.ModifiedBrownConrady)
            {
                float r2 = x * x + y * y;
                float f = 1f + intrinsics.coeffs[0] * r2 + intrinsics.coeffs[1] * r2 * r2 + intrinsics.coeffs[4] * r2 * r2 * r2;

                x *= f;
                y *= f;

                float dx = x + 2f * intrinsics.coeffs[2] * x * y + intrinsics.coeffs[3] * (r2 + 2 * x * x);
                float dy = y + 2f * intrinsics.coeffs[3] * x * y + intrinsics.coeffs[2] * (r2 + 2 * y * y);

                x = dx;
                y = dy;
            }

            if (intrinsics.model == Distortion.Ftheta)
            {
                float r = (float)Math.Sqrt(x * x + y * y);
                float rd = (1f / intrinsics.coeffs[0] * (float)Math.Atan(2f * r * (float)Math.Tan(intrinsics.coeffs[0] / 2f)));

                x *= rd / r;
                y *= rd / r;
            }

            pixel.X = x * intrinsics.fx + intrinsics.ppx;
            pixel.Y = y * intrinsics.fy + intrinsics.ppy;

            return pixel;
        }

        /// <summary>
        /// Maps the specified 2D point to the 3D space.
        /// </summary>
        /// <param name="intrinsics">The camera intrinsics to use.</param>
        /// <param name="pixel">The 2D point to map.</param>
        /// <param name="depth">The depth of the 2D point to map.</param>
        /// <returns>The corresponding 3D point.</returns>
        public static Vector3D Map2DTo3D(this Intrinsics intrinsics, Vector2D pixel, float depth)
        {
            Vector3D point = new Vector3D();

            float x = (pixel.X - intrinsics.ppx) / intrinsics.fx;
            float y = (pixel.Y - intrinsics.ppy) / intrinsics.fy;

            if (intrinsics.model == Distortion.InverseBrownConrady)
            {
                float r2 = x * x + y * y;
                float f = 1 + intrinsics.coeffs[0] * r2 + intrinsics.coeffs[1] * r2 * r2 + intrinsics.coeffs[4] * r2 * r2 * r2;
                float ux = x * f + 2 * intrinsics.coeffs[2] * x * y + intrinsics.coeffs[3] * (r2 + 2 * x * x);
                float uy = y * f + 2 * intrinsics.coeffs[3] * x * y + intrinsics.coeffs[2] * (r2 + 2 * y * y);

                x = ux;
                y = uy;
            }

            point.X = depth * x;
            point.Y = depth * y;
            point.Z = depth;

            return point;
        }
    }

    /// <summary>
    /// Represents a 2D vector/point.
    /// </summary>
    public struct Vector2D
    {
        public float X;
        public float Y;
    }

    /// <summary>
    /// Represents a 3D vector/point.
    /// </summary>
    public struct Vector3D
    {
        public float X;
        public float Y;
        public float Z;
    }
}
```

There you have it. You can now use the full power of RealSense in your C# and Unity applications!

‘Til the next time… keep coding!

The post Intel RealSense D415/435: Coordinate Mapping in C# appeared first on Vangos Pterneas.