
Sean, I have been trying the Ubuntu .deb CPAI application for about a week and a half, and I have installed and uninstalled it *5* times. Along the way, I found that each step of the install and any modification needs to be done as root, not with sudo. Even with sudo, I was seeing "file or directory not found" errors. That's what led me to try root; then everything was found and ran. Running the install from the command line, as opposed to using the Software installer, lets you watch for errors. I do have .NET 7.0 installed, and the installation has no problems with that.

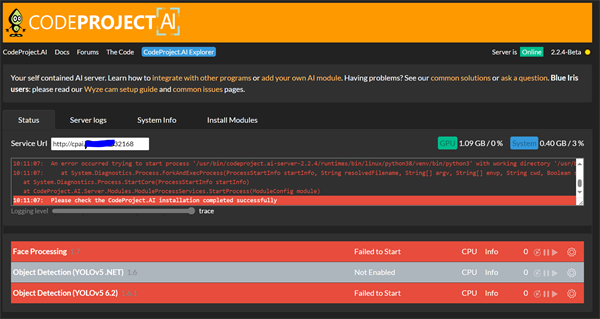

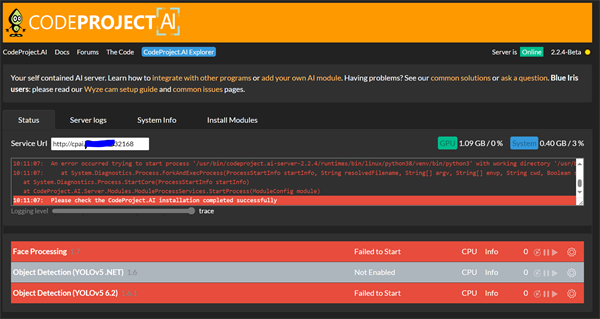

I end up with the web UI starting and the server showing as running, but I get a big red "Failed to start" banner for each module. This is after running ../../setup.sh from each module directory in an attempt to repair it. To be honest, I've given up on the Ubuntu application; five attempts is enough. I have solved the Coral USB Accelerator issue in Docker, so I am using that now. I also have a DeepStack container that just needs a restart to take over if necessary. I was using that for three weeks until I fixed the Coral-on-Docker issue.

---

I have the same experience as Steve. I just had a go at running as root rather than with sudo, but it's the same result Steve described.

---

Hey Steve,

Can you please describe the system you're on? I tested and built the Ubuntu deb package on plain vanilla Ubuntu 22.04 out of the box. I'm running it off a USB SSD, but apart from that nothing exciting at all (on purpose). If I can understand better what roadblock we have I can either try to work around it or include whatever extra steps are necessary in the box.

cheers

Chris Maunder

---

Chris,

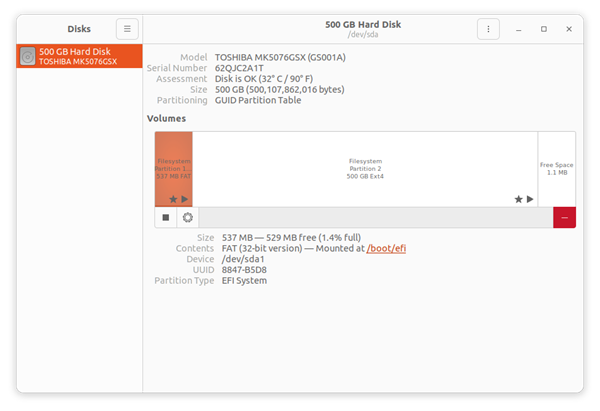

Just Ubuntu 22.04 on an HP Intel computer.

I did nothing special during the installation, just followed the prompts.

I did select the base install, however (not desktop, but server), so none of the games, Office, etc.

Distributor ID: Ubuntu

Description: Ubuntu 22.04.3 LTS

Release: 22.04

Codename: jammy

H/W path Device Class Description

========================================================

system HP EliteDesk 800 G2 DM 65W (W2R53UC#ABA)

/0 bus 8056

/0/0 memory 128KiB L1 cache

/0/1 memory 128KiB L1 cache

/0/2 memory 1MiB L2 cache

/0/3 memory 6MiB L3 cache

/0/4 processor Intel(R) Core(TM) i5-6500 CPU @ 3.20GHz

/0/5 memory 8GiB System Memory

/0/5/0 memory 8GiB SODIMM DDR4 Synchronous Unbuffered (Unregistered) 2133 MHz (0.5 ns)

/0/5/1 memory [empty]

/0/b memory 64KiB BIOS

/0/100 bridge Xeon E3-1200 v5/E3-1500 v5/6th Gen Core Processor Host Bridge/DRAM Registers

/0/100/2 /dev/fb0 display HD Graphics 530

/0/100/14 bus 100 Series/C230 Series Chipset Family USB 3.0 xHCI Controller

/0/100/14/0 usb1 bus xHCI Host Controller

/0/100/14/0/5 bus USB 2.0 Hub

/0/100/14/0/5/2 printer HP LaserJet 400 MFP M425dn

/0/100/14/0/5/3 input30 input SONiX USB DEVICE

/0/100/14/0/5/4 input34 input PixArt USB Optical Mouse

/0/100/14/1 usb2 bus xHCI Host Controller

/0/100/14.2 generic 100 Series/C230 Series Chipset Family Thermal Subsystem

/0/100/16 communication 100 Series/C230 Series Chipset Family MEI Controller #1

/0/100/16.3 communication 100 Series/C230 Series Chipset Family KT Redirection

/0/100/17 scsi0 storage Q170/Q150/B150/H170/H110/Z170/CM236 Chipset SATA Controller [AHCI Mode]

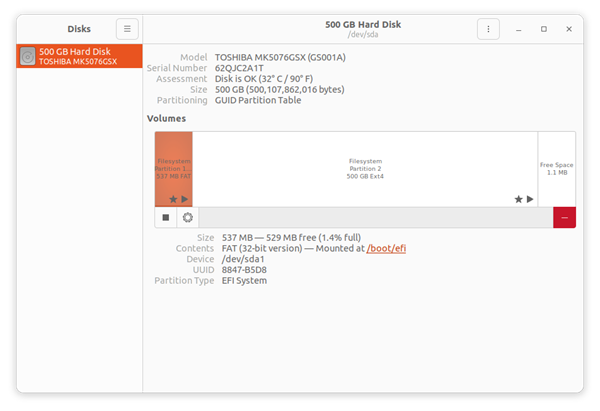

/0/100/17/0.0.0 /dev/sda disk 500GB TOSHIBA MK5076GS

/0/100/17/0.0.0/1 volume 511MiB Windows FAT volume

/0/100/17/0.0.0/2 /dev/sda2 volume 465GiB EXT4 volume

/0/100/1f bridge Q170 Chipset LPC/eSPI Controller

---

I'm running an Ubuntu Server virtual machine on a Proxmox server, with the NVIDIA GPU passed through. I'll spin up an Ubuntu Desktop instance, give it a try to see if that changes anything, and report back.

---

Have you tried running bash ../../setup.sh as root or under sudo? Any change?

cheers

Chris Maunder

---

Yes. The last time I did the install, I did everything except running the start.sh as root.

I did dpkg -i as root, then ../setup.sh as root also for the modules.

I did this by running sudo -i to switch to the root user. That was the only way I got around the directory/file not found errors I was seeing during the install.

I might try it again and document more carefully what happens during the install.

Initially I did:

1. Open home/downloads.
2. Right-click on codeproject.ai-server_2.2.4_Ubuntu_x64.deb.
3. Choose "Open with other application".
4. Select Software Install.
5. Click Install.
6. Enter my password.
7. Click Authenticate.
8. Wait while it shows "Preparing".
9. Open a terminal in /usr/bin/codeproject.ai-server-2.2.4.
10. Run ./start.sh.

Firefox opens at localhost:32168 and shows "Installing Face Processing", while the terminal shows:

Gtk+ 2.x symbols detected. Using Gtk+ 2.x and Gtk+ 3 in the same process is not supported.

Failed to load module "canberra-gtk-module"

Still "Installing Face Processing" 15 minutes in. I'll keep waiting.

In the meantime I switched Blue Iris to DeepStack, which is running ipcam-combined.

Waiting for Face Processing to install: 25 minutes so far.

I'll continue if anything interesting happens.

---

OK, I guess that's not working.

After one hour 15 minutes, it shows the server as offline and still shows "Installing Face Processing".

Trace ModuleRunner Start

Trace Starting Background AI Modules

Infor ** Setting up initial modules. Please be patient...

Infor ** Installing initial module FaceProcessing.

Infor Preparing to install module 'FaceProcessing'

Gtk-Message: 12:30:28.893: Not loading module "atk-bridge": The functionality is provided by GTK natively. Please try to not load it.

(firefox:820325): Gtk-WARNING **: 12:30:28.961: GTK+ module /snap/firefox/3252/gnome-platform/usr/lib/gtk-2.0/modules/libcanberra-gtk-module.so cannot be loaded.

GTK+ 2.x symbols detected. Using GTK+ 2.x and GTK+ 3 in the same process is not supported.

Gtk-Message: 12:30:28.961: Failed to load module "canberra-gtk-module"

(firefox:820325): Gtk-WARNING **: 12:30:28.963: GTK+ module /snap/firefox/3252/gnome-platform/usr/lib/gtk-2.0/modules/libcanberra-gtk-module.so cannot be loaded.

GTK+ 2.x symbols detected. Using GTK+ 2.x and GTK+ 3 in the same process is not supported.

Gtk-Message: 12:30:28.963: Failed to load module "canberra-gtk-module"

Infor Downloading module 'FaceProcessing'

Infor Installing module 'FaceProcessing'

Debug Installer script at '/usr/bin/codeproject.ai-server-2.2.4/setup.sh'

Infor FaceProcessing: Installing CodeProject.AI Analysis Module

Infor FaceProcessing: ======================================================================

Infor FaceProcessing: CodeProject.AI Installer

Infor FaceProcessing: ======================================================================

Infor FaceProcessing: 270.05 GiB available

Infor FaceProcessing: Checking GPU support

Infor FaceProcessing: CUDA Present...No

Infor FaceProcessing: Allowing GPU Support: Yes

Infor FaceProcessing: Allowing CUDA Support: Yes

Infor FaceProcessing: General CodeProject.AI setup

[sudo] password for steve: Debug Current Version is 2.2.4-Beta

Infor Server: This is the latest version

ATTENTION: default value of option mesa_glthread overridden by environment.

ATTENTION: default value of option mesa_glthread overridden by environment.

Unhandled exception. System.ObjectDisposedException: Cannot write to a closed TextWriter.

Object name: 'StreamWriter'.

at System.IO.StreamWriter.<ThrowIfDisposed>g__ThrowObjectDisposedException|76_0()

at System.IO.StreamWriter.WriteLine(String value)

at CodeProject.AI.Server.Modules.ModuleInstaller.SendOutputToLog(TextWriter log, Object sender, DataReceivedEventArgs e)

at CodeProject.AI.Server.Modules.ModuleInstaller.<>c__DisplayClass20_0.<InstallModuleAsync>b__0(Object s, DataReceivedEventArgs e)

at System.Diagnostics.AsyncStreamReader.FlushMessageQueue(Boolean rethrowInNewThread)

--- End of stack trace from previous location ---

at System.Threading.ThreadPoolWorkQueue.Dispatch()

at System.Threading.PortableThreadPool.WorkerThread.WorkerThreadStart()

---

I don't understand where the GTK stuff is coming from. I'm also mildly freaked out to see a module called "Canberra", which is where I grew up. Weird flashbacks happening here...

cheers

Chris Maunder

---

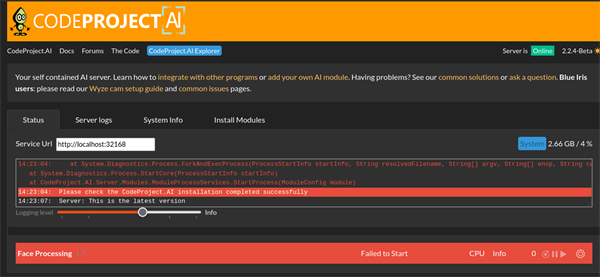

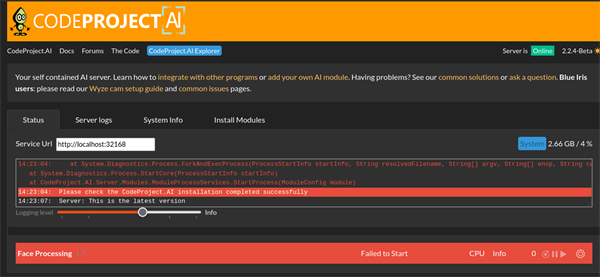

Changed directory to /usr/bin/codeproject.ai-server-2.2.4/modules/FaceProcessing

Ran ../../setup.sh

It took a while to install, but I got "Setup complete".

Ran ../../start.sh and got the following:

Setup complete

root@Ubuntu:/usr/bin/codeproject.ai-server-2.2.4/modules/FaceProcessing# ../../start.sh

Infor ** Operating System: Linux (Linux 6.2.0-34-generic #34~22.04.1-Ubuntu SMP PREEMPT_DYNAMIC Thu Sep 7 13:12:03 UTC 2)

Infor ** CPUs: Intel(R) Core(TM) i5-6500 CPU @ 3.20GHz (Intel)

Infor ** 1 CPU x 4 cores. 4 logical processors (x64)

Infor ** GPU: HD Graphics 530 (rev 06) (Intel Corporation)

Infor ** System RAM: 8 GiB

Infor ** Target: Linux

Infor ** BuildConfig: Release

Infor ** Execution Env: Native

Infor ** Runtime Env: Production

Infor ** .NET framework: .NET 7.0.12

Infor ** App DataDir: /etc/codeproject/ai

Infor Video adapter info:

Infor HD Graphics 530 (rev 06):

Infor Driver Version

Infor Video Processor

Infor *** STARTING CODEPROJECT.AI SERVER

Infor RUNTIMES_PATH = /usr/bin/codeproject.ai-server-2.2.4/runtimes

Infor PREINSTALLED_MODULES_PATH = /usr/bin/codeproject.ai-server-2.2.4/preinstalled-modules

Infor MODULES_PATH = /usr/bin/codeproject.ai-server-2.2.4/modules

Infor PYTHON_PATH = /bin/linux/%PYTHON_RUNTIME%/venv/bin/python3

Infor Data Dir = /etc/codeproject/ai

Infor ** Server version: 2.2.4-Beta

Server is listening on port 32168

Server is also listening on legacy port 5000

Trace ModuleRunner Start

Trace Starting Background AI Modules

Trace GetCommandByRuntime: Runtime=python38, Location=Shared

Trace Command: /usr/bin/codeproject.ai-server-2.2.4/runtimes/bin/linux/python38/venv/bin/python3

Debug

Debug

Debug Attempting to start FaceProcessing with /usr/bin/codeproject.ai-server-2.2.4/runtimes/bin/linux/python38/venv/bin/python3 "/usr/bin/codeproject.ai-server-2.2.4/modules/FaceProcessing/intelligencelayer/face.py"

Trace Starting /usr...untimes/bin/linux/python38/venv/bin/python3 "/usr.../FaceProcessing/intelligencelayer/face.py"

Infor

Infor ** Module 'Face Processing' 1.7 (ID: FaceProcessing)

Infor ** Module Path: /usr/bin/codeproject.ai-server-2.2.4/modules/FaceProcessing

Infor ** AutoStart: True

Infor ** Queue: faceprocessing_queue

Infor ** Platforms: windows,linux,linux-arm64,macos,macos-arm64

Infor ** GPU: Support enabled

Infor ** Parallelism: 0

Infor ** Accelerator:

Infor ** Half Precis.: enable

Infor ** Runtime: python38

Infor ** Runtime Loc: Shared

Infor ** FilePath: intelligencelayer\face.py

Infor ** Pre installed: False

Infor ** Start pause: 3 sec

Infor ** LogVerbosity:

Infor ** Valid: True

Infor ** Environment Variables

Infor ** APPDIR = %CURRENT_MODULE_PATH%\intelligencelayer

Infor ** DATA_DIR = %DATA_DIR%

Infor ** MODE = MEDIUM

Infor ** MODELS_DIR = %CURRENT_MODULE_PATH%\assets

Infor ** PROFILE = desktop_gpu

Infor ** USE_CUDA = False

Infor ** YOLOv5_AUTOINSTALL = false

Infor ** YOLOv5_VERBOSE = false

Infor

Error Error trying to start Face Processing (intelligencelayer\face.py)

Error An error occurred trying to start process '/usr/bin/codeproject.ai-server-2.2.4/runtimes/bin/linux/python38/venv/bin/python3' with working directory '/usr/bin/codeproject.ai-server-2.2.4/modules/FaceProcessing'. No such file or directory

Error at System.Diagnostics.Process.ForkAndExecProcess(ProcessStartInfo startInfo, String resolvedFilename, String[] argv, String[] envp, String cwd, Boolean setCredentials, UInt32 userId, UInt32 groupId, UInt32[] groups, Int32& stdinFd, Int32& stdoutFd, Int32& stderrFd, Boolean usesTerminal, Boolean throwOnNoExec)

at System.Diagnostics.Process.StartCore(ProcessStartInfo startInfo)

at CodeProject.AI.Server.Modules.ModuleProcessServices.StartProcess(ModuleConfig module)

Error *** Please check the CodeProject.AI installation completed successfully

mkdir: cannot create directory ‘/run/user/0’: Permission denied

Debug Current Version is 2.2.4-Beta

Infor Server: This is the latest version

Authorization required, but no authorization protocol specified

Error: cannot open display: :0
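The decisive line in that log is the "No such file or directory" raised when the server launches the shared python38 venv interpreter, even though setup reported "Setup complete". A venv's bin/python3 is normally a symlink to a base Python, so one hypothesis worth checking is a missing file or a dangling link. The sketch below assumes the default path copied from this log (pass another path as the first argument); it only inspects the filesystem and changes nothing.

```python
import os
import sys

# Assumption: default path copied from the "An error occurred trying to start
# process ..." line above; pass a different path as the first argument.
DEFAULT_PATH = "/usr/bin/codeproject.ai-server-2.2.4/runtimes/bin/linux/python38/venv/bin/python3"


def check_interpreter(path: str) -> str:
    """Return a one-line diagnosis for the interpreter at `path`."""
    if os.path.islink(path) and not os.path.exists(path):
        # exists() follows the link, so a link whose target is gone reports False;
        # exec() on such a link fails with "No such file or directory".
        return f"broken symlink -> {os.readlink(path)}"
    if not os.path.exists(path):
        return "missing"
    if not os.path.isfile(path) or not os.access(path, os.X_OK):
        return "not an executable file"
    return "ok"


if __name__ == "__main__":
    target = sys.argv[1] if len(sys.argv) > 1 else DEFAULT_PATH
    print(f"{target}: {check_interpreter(target)}")
```

If it reports a broken symlink, the link target shows which base Python the venv expected to find.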

---

Hello,

In version 2.1.11, CodeProject.AI was working very well in Docker with a Google Coral.

However, in the latest version (2.2.4) it no longer works.

The error log follows:

20:23:29:Module 'ObjectDetection (Coral)' 1.5.1 (ID: ObjectDetectionCoral)

20:23:29:Module Path: /app/modules/ObjectDetectionCoral

20:23:29:AutoStart: True

20:23:29:Queue: objectdetection_queue

20:23:29:Platforms: windows,linux,linux-arm64,macos,macos-arm64

20:23:29:GPU: Support enabled

20:23:29:Parallelism: 1

20:23:29:Accelerator:

20:23:29:Half Precis.: enable

20:23:29:Runtime: python39

20:23:29:Runtime Loc: Local

20:23:29:FilePath: objectdetection_coral_adapter.py

20:23:29:Pre installed: False

20:23:29:Start pause: 1 sec

20:23:29:LogVerbosity:

20:23:29:Valid: True

20:23:29:Environment Variables

20:23:29:MODELS_DIR = %CURRENT_MODULE_PATH%/assets

20:23:29:MODEL_SIZE = Medium

20:23:29:

20:23:29:Started ObjectDetection (Coral) module

20:23:36:objectdetection_coral_adapter.py: INFO: Created TensorFlow Lite XNNPACK delegate for CPU.

20:23:36:objectdetection_coral_adapter.py: Edge TPU detected

20:23:36:objectdetection_coral_adapter.py: Timeout connecting to the server

20:23:58:ObjectDetection (Coral): [RuntimeError] : Traceback (most recent call last):

File "/app/modules/ObjectDetectionCoral/objectdetection_coral_adapter.py", line 88, in do_detection

result = do_detect(opts, img, score_threshold)

File "/app/modules/ObjectDetectionCoral/objectdetection_coral.py", line 191, in do_detect

interpreter.invoke()

File "/app/modules/ObjectDetectionCoral/bin/linux/python39/venv/lib/python3.9/site-packages/tflite_runtime/interpreter.py", line 941, in invoke

self._interpreter.Invoke()

RuntimeError: Encountered an unresolved custom op. Did you miss a custom op or delegate?Node number 11 (EdgeTpuDelegateForCustomOp) failed to invoke.

20:23:58:ObjectDetection (Coral): Rec'd request for ObjectDetection (Coral) command 'detect' (...57acc1) took 46ms


Any solution?

---

I'm having the same issue as well. I'll update if I figure anything out.

---

I figured out a bit of a workaround. I'm not sure every step is strictly necessary, but unfortunately my time is limited right now.

1. Roll back to CPAI version 2.1.11 (delete any persistent volumes you have).

2. Install the ObjectDetection (Coral) module via the web interface. Start it up and verify that it's not working, then stop the module. At this point you should be on ObjectDetection (Coral) version 1.3.

3. Exec into the container, for example:

   docker exec -t -i codeprojectai /bin/bash

4. Delete the Python 3.9 venv:

   rm -R /app/modules/ObjectDetectionCoral/bin/*

5. Change to the module directory:

   cd /app/modules/ObjectDetectionCoral

6. Re-run the module setup:

   ../../setup.sh

7. Go back to the web UI, start the Coral module, and verify that it's working. If it's not, restart the container. If there's any more goofy business, be sure to clear your browser cache. It'll getcha.

   docker restart codeprojectai

8. Delete the old image to ensure a clean slate:

   docker image rm codeproject/ai-server:latest

   If all is working well at this point, upgrade to the latest version: change your image from codeproject/ai-server:2.1.11 to codeproject/ai-server:latest.

9. At this point the module will still be at version 1.3. Go to the "Install Modules" tab in the web UI and update the ObjectDetection (Coral) module.

10. At this point my module kept "losing contact." After restarting the container and clearing the cache (try Ctrl+F5), I seem to be back in business.

These steps have worked for two separate instances that I'm running, both Debian 12: one is a VM, and the other is bare metal.
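The in-container part of the workaround (steps 4 to 7) can be scripted. The sketch below assumes the container name codeprojectai and the module path used in the steps; it prints the commands and only executes them when given --run. It runs the restart from step 7 unconditionally, uses rm -rf rather than rm -R so an already-empty bin folder doesn't abort the chain, and leaves starting and checking the module in the web UI as a manual step.

```python
import shlex
import subprocess
import sys
from typing import List

# Assumptions: container name and module path as used in the steps above.
CONTAINER = "codeprojectai"
MODULE_DIR = "/app/modules/ObjectDetectionCoral"


def reset_coral_commands(container: str = CONTAINER) -> List[List[str]]:
    """Return the venv reset and container restart as docker argv lists."""
    # The bin/* glob needs a shell, so delete/cd/setup run as one bash -c string
    # inside the container; -t -i are dropped because nothing here is interactive.
    inner = f"rm -rf {MODULE_DIR}/bin/* && cd {MODULE_DIR} && ../../setup.sh"
    return [
        ["docker", "exec", container, "/bin/bash", "-c", inner],
        ["docker", "restart", container],
    ]


if __name__ == "__main__":
    execute = "--run" in sys.argv
    for cmd in reset_coral_commands():
        print(shlex.join(cmd))  # shell-quoted, ready to paste
        if execute:
            subprocess.run(cmd, check=True)
```

Running it without arguments is a dry run, which makes it easy to confirm the container name before anything is deleted.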

---

Hi,

thanks for the help!

I managed to make it work by following your steps!

---

That's fantastic!

---

Thanks. This worked for me too.

Also, thanks for telling me about the docker exec command.

I guess I should read the Docker docs more carefully.

---

Thanks! This worked, except I had to run "sudo apt update" after installing :latest and before updating Coral from 1.3 to 1.5.1.

@chris-maunder Could you fix this so we don't have to do this workaround to get Coral 1.5.1 working on 2.2.4?

---

Already done. We're releasing 2.3.2 (Docker) to internal testers tonight

cheers

Chris Maunder

---

Hi, same here: ObjectDetection (Coral) 1.5.1 is not working on Windows, using a USB Coral TPU. Very sad.

---

Ever since I updated to v2.1.x, I have had nothing but issues. Is there a place where I can download previous versions that I know worked well with Blue Iris? Specifically 2.0.8?

---

Cool, thank you. I hope this fixes my issues.

---

I sincerely hope this works for you, but my recent experience suggests that it may not. Even when the main CodeProject website is fully functional (it has had some downtime and full outages in the last few days), attempting to install previous versions (2.1.8 in my case) always results in some failures when downloading or installing modules. The main installation claims "success", but I cannot get Object Detection (YOLOv5 .NET) to install and run, even after repeated uninstall/reinstall attempts, as I always see the following:

Error in Install ObjectDetectionNet. Unknown response from server

So basically, while all of the previous installation files are readily available for download, they don't actually work or result in a functional system. I am basically stuck on 2.1.8 on my production Blue Iris machine, as I cannot trust that 2.2.4 will actually work, nor do I have a safety-net path back to 2.1.8 should the newer version fail.

modified 1-Oct-23 23:00pm.

---

The downgrade fixed my issue perfectly. I will test updating again later, but I am good for now.

---

UPDATE: Issue solved

======================

I upgraded to CUDA 11.8 from 11.7 (per CodeProject.AI Server: AI the easy way). When I originally installed CodeProject.AI a couple of months ago, the recommended CUDA version was 11.7, so this seems to have made a difference. I also uninstalled and reinstalled CodeProject.AI 2.2.4 and rebooted.

Current CUDA version recommendation:

1. Install the CUDA drivers (we recommend CUDA 11.8 if running Windows).
2. Install CUDA Toolkit 11.8.
3. Download and run our cuDNN install script to install cuDNN 8.9.4.
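To see which CUDA version the server detected, the System Info line (for example "Driver: 536.23 CUDA: 11.7.64 (max supported: 12.2)") is enough. The small sketch below, assuming that line format, checks it against the 11.8 recommendation quoted above. It compares only major.minor, and it ignores "max supported", which appears to be the driver's ceiling rather than the installed toolkit.

```python
import re
from typing import Optional, Tuple

# The recommendation quoted above; assumes only major.minor matters.
RECOMMENDED: Tuple[int, int] = (11, 8)


def installed_cuda(driver_line: str) -> Optional[Tuple[int, int]]:
    """Pull (major, minor) out of 'CUDA: 11.7.64'; '(max supported: 12.2)' is ignored."""
    match = re.search(r"\bCUDA: (\d+)\.(\d+)", driver_line)
    return (int(match.group(1)), int(match.group(2))) if match else None


def matches_recommendation(driver_line: str, recommended: Tuple[int, int] = RECOMMENDED) -> bool:
    """True only when the detected CUDA major.minor equals the recommended one."""
    return installed_cuda(driver_line) == recommended
```

For the system info posted in the original report below, matches_recommendation("Driver: 536.23 CUDA: 11.7.64 (max supported: 12.2) Compute: 6.1") returns False, consistent with the upgrade to 11.8 described above.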

ORIGINAL POST:

=================

I had issues getting (YOLOv5 6.2) 1.6.1 installed. I get the same read timeout errors when attempting to install Object Detection (YOLOv5 6.2) 1.6.1. Is there an issue with the https://download.pytorch.org site? All the pulls keep timing out for me. The only way I can install PyTorch is to run "pip3 install torch".

I was able to get (YOLOv5 6.2) 1.6.1 installed with the following manual steps:

1. Open a command prompt with admin rights.
2. Run "C:\Program Files\CodeProject\AI\runtimes\bin\windows\python37\venv\Scripts\activate"
3. Run cd "C:\Program Files\CodeProject\AI\modules\ObjectDetectionYolo"
4. Comment out the torch install lines in requirements.windows.cuda.txt.
5. Run pip3 install torch
6. Run pip install -r requirements.windows.cuda.txt
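Since the failures are read timeouts against download.pytorch.org, it may be worth retrying the unmodified requirements file with a longer timeout before commenting out the torch lines. pip's --timeout (default 15 seconds) and --retries (default 5) options are standard; the values below are arbitrary. A sketch, assuming it is run with the activated python37 venv from inside the ObjectDetectionYolo folder (steps 1 to 3 above):

```python
import subprocess
import sys
from typing import List


def pip_install_command(requirements: str, timeout_s: int = 120, retries: int = 10) -> List[str]:
    """Build a pip install command with a longer socket timeout and more retries."""
    # sys.executable is the interpreter running this script, i.e. the activated
    # venv's python, so packages land in that venv rather than a global Python.
    return [
        sys.executable, "-m", "pip", "install",
        "--timeout", str(timeout_s),  # pip's default is 15 seconds
        "--retries", str(retries),    # pip's default is 5
        "-r", requirements,
    ]


if __name__ == "__main__":
    cmd = pip_install_command("requirements.windows.cuda.txt")
    print(" ".join(cmd))
    if "--run" in sys.argv:
        subprocess.run(cmd, check=True)
```

This only helps if the site is slow rather than unreachable; persistent timeouts point back at the network or the download server.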

However, I can't get it to use the GPU. It will only run in CPU mode. The Object Detection (YOLOv5 .NET) 1.6 will run in GPU mode. Why won't (YOLOv5 6.2) 1.6.1 run in GPU mode?
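One plausible explanation for CPU-only behaviour is the manual "pip3 install torch" step: as far as I know, the Windows torch wheels on PyPI are CPU-only builds, and the CUDA builds are served from download.pytorch.org, the very site that was timing out. Treat that as a hypothesis to check. The sketch below assumes it is run with the same python37 venv interpreter; torch.version.cuda is None for CPU-only builds.

```python
from typing import Optional


def describe_torch(cuda_version: Optional[str], cuda_available: bool) -> str:
    """Summarise a torch install from torch.version.cuda and torch.cuda.is_available()."""
    if cuda_version is None:
        # CPU-only builds report no CUDA version at all.
        return "CPU-only torch build: a CUDA build is needed for GPU mode"
    if not cuda_available:
        return f"CUDA {cuda_version} build, but no usable GPU or driver was found"
    return f"CUDA {cuda_version} build with a usable GPU"


if __name__ == "__main__":
    try:
        import torch
    except ImportError:
        print("torch is not installed in this interpreter")
    else:
        print(torch.__version__, describe_torch(torch.version.cuda, torch.cuda.is_available()))
```

If it reports a CPU-only build, the YOLOv5 6.2 module would fall back to CPU regardless of the CUDA toolkit installed on the machine.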

Server version: 2.2.4-Beta

Operating System: Windows (Microsoft Windows 10.0.19045)

CPUs: Intel(R) Core(TM) i7-7700 CPU @ 3.60GHz (Intel)

1 CPU x 4 cores. 8 logical processors (x64)

GPU: NVIDIA GeForce GT 1030 (2 GiB) (NVIDIA)

Driver: 536.23 CUDA: 11.7.64 (max supported: 12.2) Compute: 6.1

System RAM: 16 GiB

Target: Windows

BuildConfig: Release

Execution Env: Native

Runtime Env: Production

.NET framework: .NET 7.0.5

Video adapter info:

NVIDIA GeForce GT 1030:

Driver Version 31.0.15.3623

Video Processor NVIDIA GeForce GT 1030

Intel(R) HD Graphics 630:

Driver Version 31.0.101.2111

Video Processor Intel(R) HD Graphics Family

System GPU info:

GPU 3D Usage 12%

GPU RAM Usage 414 MiB

Global Environment variables:

CPAI_APPROOTPATH = <root>

CPAI_PORT = 32168

Module 'Object Detection (YOLOv5 6.2)' 1.6.1 (ID: ObjectDetectionYolo)

Module Path: <root>\modules\ObjectDetectionYolo

AutoStart: True

Queue: objectdetection_queue

Platforms: all

GPU: Support enabled

Parallelism: 0

Accelerator:

Half Precis.: enable

Runtime: python37

Runtime Loc: Shared

FilePath: detect_adapter.py

Pre installed: False

Start pause: 1 sec

LogVerbosity:

Valid: True

Environment Variables

APPDIR = <root>\modules\ObjectDetectionYolo

CPAI_MODULE_SUPPORT_GPU = True

CUSTOM_MODELS_DIR = <root>\modules\ObjectDetectionYolo\custom-models

MODELS_DIR = <root>\modules\ObjectDetectionYolo\assets

MODEL_SIZE = Medium

USE_CUDA = True

YOLOv5_AUTOINSTALL = false

YOLOv5_VERBOSE = false

Started: 28 Sep 2023 3:31:19 PM Eastern Standard Time

LastSeen: 28 Sep 2023 4:01:08 PM Eastern Standard Time

Status: Stopped

Processed: 296

Provider:

CanUseGPU: False

HardwareType: CPU

Installation Log

2023-09-27 18:27:53: Installing CodeProject.AI Analysis Module

2023-09-27 18:27:53: ========================================================================

2023-09-27 18:27:53: CodeProject.AI Installer

2023-09-27 18:27:53: ========================================================================

2023-09-27 18:27:53: Checking GPU support

2023-09-27 18:27:53: CUDA Present...True

2023-09-27 18:27:53: Allowing GPU Support: Yes

2023-09-27 18:27:53: Allowing CUDA Support: Yes

2023-09-27 18:27:53: General CodeProject.AI setup

2023-09-27 18:27:53: Creating Directories...Done

2023-09-27 18:27:53: Processing Core SDK

2023-09-27 18:27:53: Installing module ObjectDetectionYolo 1.6.1

2023-09-27 18:27:53: Checking for python37 download...Present

2023-09-27 18:27:53: Creating Virtual Environment (Shared)...Python 3.7 Already present

2023-09-27 18:27:53: Enabling our Virtual Environment...Done

2023-09-27 18:27:53: Confirming we have Python 3.7...present

2023-09-27 18:27:53: CUDA version is 11.7

2023-09-27 18:27:54: Ensuring Python package manager (pip) is installed...Done

2023-09-27 18:27:55: Ensuring Python package manager (pip) is up to date...Done

2023-09-27 18:27:55: Choosing Python packages from requirements.windows.cuda.txt

2023-09-27 18:28:33: ERROR: Exception:

2023-09-27 18:28:33: Traceback (most recent call last):

2023-09-27 18:28:33: File "C:\Program Files\CodeProject\AI\runtimes\bin\windows\python37\venv\lib\site-packages\pip\_vendor\urllib3\response.py", line 438, in _error_catcher

2023-09-27 18:28:33: yield

2023-09-27 18:28:33: File "C:\Program Files\CodeProject\AI\runtimes\bin\windows\python37\venv\lib\site-packages\pip\_vendor\urllib3\response.py", line 561, in read

2023-09-27 18:28:33: data = self._fp_read(amt) if not fp_closed else b""

2023-09-27 18:28:33: File "C:\Program Files\CodeProject\AI\runtimes\bin\windows\python37\venv\lib\site-packages\pip\_vendor\urllib3\response.py", line 519, in _fp_read

2023-09-27 18:28:33: data = self._fp.read(chunk_amt)

2023-09-27 18:28:33: File "C:\Program Files\CodeProject\AI\runtimes\bin\windows\python37\venv\lib\site-packages\pip\_vendor\cachecontrol\filewrapper.py", line 90, in read

2023-09-27 18:28:33: data = self.__fp.read(amt)

2023-09-27 18:28:33: File "C:\Program Files\CodeProject\AI\runtimes\bin\windows\python37\lib\http\client.py", line 461, in read

2023-09-27 18:28:33: n = self.readinto(b)

2023-09-27 18:28:33: File "C:\Program Files\CodeProject\AI\runtimes\bin\windows\python37\lib\http\client.py", line 505, in readinto

2023-09-27 18:28:33: n = self.fp.readinto(b)

2023-09-27 18:28:33: File "C:\Program Files\CodeProject\AI\runtimes\bin\windows\python37\lib\socket.py", line 589, in readinto

2023-09-27 18:28:33: return self._sock.recv_into(b)

2023-09-27 18:28:33: File "C:\Program Files\CodeProject\AI\runtimes\bin\windows\python37\lib\ssl.py", line 1071, in recv_into

2023-09-27 18:28:33: return self.read(nbytes, buffer)

2023-09-27 18:28:33: File "C:\Program Files\CodeProject\AI\runtimes\bin\windows\python37\lib\ssl.py", line 929, in read

2023-09-27 18:28:33: return self._sslobj.read(len, buffer)

2023-09-27 18:28:33: socket.timeout: The read operation timed out

2023-09-27 18:28:33: During handling of the above exception, another exception occurred:

2023-09-27 18:28:33: Traceback (most recent call last):

2023-09-27 18:28:33: File "C:\Program Files\CodeProject\AI\runtimes\bin\windows\python37\venv\lib\site-packages\pip\_internal\cli\base_command.py", line 180, in exc_logging_wrapper

2023-09-27 18:28:33: status = run_func(*args)

2023-09-27 18:28:33: File "C:\Program Files\CodeProject\AI\runtimes\bin\windows\python37\venv\lib\site-packages\pip\_internal\cli\req_command.py", line 248, in wrapper

2023-09-27 18:28:33: return func(self, options, args)

2023-09-27 18:28:33: File "C:\Program Files\CodeProject\AI\runtimes\bin\windows\python37\venv\lib\site-packages\pip\_internal\commands\install.py", line 378, in run

2023-09-27 18:28:33: reqs, check_supported_wheels=not options.target_dir

2023-09-27 18:28:33: File "C:\Program Files\CodeProject\AI\runtimes\bin\windows\python37\venv\lib\site-packages\pip\_internal\resolution\resolvelib\resolver.py", line 93, in resolve

2023-09-27 18:28:33: collected.requirements, max_rounds=limit_how_complex_resolution_can_be

2023-09-27 18:28:33: File "C:\Program Files\CodeProject\AI\runtimes\bin\windows\python37\venv\lib\site-packages\pip\_vendor\resolvelib\resolvers.py", line 546, in resolve

2023-09-27 18:28:33: state = resolution.resolve(requirements, max_rounds=max_rounds)

2023-09-27 18:28:33: File "C:\Program Files\CodeProject\AI\runtimes\bin\windows\python37\venv\lib\site-packages\pip\_vendor\resolvelib\resolvers.py", line 397, in resolve

2023-09-27 18:28:33: self._add_to_criteria(self.state.criteria, r, parent=None)

2023-09-27 18:28:33: File "C:\Program Files\CodeProject\AI\runtimes\bin\windows\python37\venv\lib\site-packages\pip\_vendor\resolvelib\resolvers.py", line 173, in _add_to_criteria

2023-09-27 18:28:33: if not criterion.candidates:

2023-09-27 18:28:33: File "C:\Program Files\CodeProject\AI\runtimes\bin\windows\python37\venv\lib\site-packages\pip\_vendor\resolvelib\structs.py", line 156, in __bool__

2023-09-27 18:28:33: return bool(self._sequence)

2023-09-27 18:28:33: File "C:\Program Files\CodeProject\AI\runtimes\bin\windows\python37\venv\lib\site-packages\pip\_internal\resolution\resolvelib\found_candidates.py", line 155, in __bool__

2023-09-27 18:28:33: return any(self)

2023-09-27 18:28:33: File "C:\Program Files\CodeProject\AI\runtimes\bin\windows\python37\venv\lib\site-packages\pip\_internal\resolution\resolvelib\found_candidates.py", line 143, in <genexpr>

2023-09-27 18:28:33: return (c for c in iterator if id(c) not in self._incompatible_ids)

2023-09-27 18:28:33: File "C:\Program Files\CodeProject\AI\runtimes\bin\windows\python37\venv\lib\site-packages\pip\_internal\resolution\resolvelib\found_candidates.py", line 47, in _iter_built

2023-09-27 18:28:33: candidate = func()

2023-09-27 18:28:33: File "C:\Program Files\CodeProject\AI\runtimes\bin\windows\python37\venv\lib\site-packages\pip\_internal\resolution\resolvelib\factory.py", line 211, in _make_candidate_from_link

2023-09-27 18:28:33: version=version,

2023-09-27 18:28:33: File "C:\Program Files\CodeProject\AI\runtimes\bin\windows\python37\venv\lib\site-packages\pip\_internal\resolution\resolvelib\candidates.py", line 299, in __init__

2023-09-27 18:28:33: version=version,

2023-09-27 18:28:33: File "C:\Program Files\CodeProject\AI\runtimes\bin\windows\python37\venv\lib\site-packages\pip\_internal\resolution\resolvelib\candidates.py", line 156, in __init__

2023-09-27 18:28:33: self.dist = self._prepare()

2023-09-27 18:28:33: File "C:\Program Files\CodeProject\AI\runtimes\bin\windows\python37\venv\lib\site-packages\pip\_internal\resolution\resolvelib\candidates.py", line 225, in _prepare

2023-09-27 18:28:33: dist = self._prepare_distribution()

2023-09-27 18:28:33: File "C:\Program Files\CodeProject\AI\runtimes\bin\windows\python37\venv\lib\site-packages\pip\_internal\resolution\resolvelib\candidates.py", line 304, in _prepare_distribution

2023-09-27 18:28:33: return preparer.prepare_linked_requirement(self._ireq, parallel_builds=True)

2023-09-27 18:28:33: File "C:\Program Files\CodeProject\AI\runtimes\bin\windows\python37\venv\lib\site-packages\pip\_internal\operations\prepare.py", line 538, in prepare_linked_requirement

2023-09-27 18:28:33: return self._prepare_linked_requirement(req, parallel_builds)

2023-09-27 18:28:33: File "C:\Program Files\CodeProject\AI\runtimes\bin\windows\python37\venv\lib\site-packages\pip\_internal\operations\prepare.py", line 615, in _prepare_linked_requirement

2023-09-27 18:28:33: hashes,

2023-09-27 18:28:33: File "C:\Program Files\CodeProject\AI\runtimes\bin\windows\python37\venv\lib\site-packages\pip\_internal\operations\prepare.py", line 170, in unpack_url

2023-09-27 18:28:33: hashes=hashes,

2023-09-27 18:28:33: File "C:\Program Files\CodeProject\AI\runtimes\bin\windows\python37\venv\lib\site-packages\pip\_internal\operations\prepare.py", line 107, in get_http_url

2023-09-27 18:28:33: from_path, content_type = download(link, temp_dir.path)

2023-09-27 18:28:33: File "C:\Program Files\CodeProject\AI\runtimes\bin\windows\python37\venv\lib\site-packages\pip\_internal\network\download.py", line 147, in __call__

2023-09-27 18:28:33: for chunk in chunks:

2023-09-27 18:28:33: File "C:\Program Files\CodeProject\AI\runtimes\bin\windows\python37\venv\lib\site-packages\pip\_internal\network\utils.py", line 87, in response_chunks

2023-09-27 18:28:33: decode_content=False,

2023-09-27 18:28:33: File "C:\Program Files\CodeProject\AI\runtimes\bin\windows\python37\venv\lib\site-packages\pip\_vendor\urllib3\response.py", line 622, in stream

2023-09-27 18:28:33: data = self.read(amt=amt, decode_content=decode_content)

2023-09-27 18:28:33: File "C:\Program Files\CodeProject\AI\runtimes\bin\windows\python37\venv\lib\site-packages\pip\_vendor\urllib3\response.py", line 587, in read

2023-09-27 18:28:33: raise IncompleteRead(self._fp_bytes_read, self.length_remaining)

2023-09-27 18:28:33: File "C:\Program Files\CodeProject\AI\runtimes\bin\windows\python37\lib\contextlib.py", line 130, in __exit__

2023-09-27 18:28:33: self.gen.throw(type, value, traceback)

2023-09-27 18:28:33: File "C:\Program Files\CodeProject\AI\runtimes\bin\windows\python37\venv\lib\site-packages\pip\_vendor\urllib3\response.py", line 443, in _error_catcher

2023-09-27 18:28:33: raise ReadTimeoutError(self._pool, None, "Read timed out.")

2023-09-27 18:28:33: pip._vendor.urllib3.exceptions.ReadTimeoutError: HTTPSConnectionPool(host='download.pytorch.org', port=443): Read timed out.

2023-09-27 18:28:33: Installing Packages into Virtual Environment...Success

2023-09-27 18:28:38: Downloading Standard YOLO models...Expanding...Done.

2023-09-27 18:28:46: Downloading Custom YOLO models...Expanding...Done.

2023-09-27 18:28:46: Installing Server SDK support:

2023-09-27 18:28:46: CUDA version is 11.7

2023-09-27 18:28:47: Ensuring Python package manager (pip) is installed...Done

2023-09-27 18:28:48: Ensuring Python package manager (pip) is up to date...Done

2023-09-27 18:28:48: Choosing Python packages from requirements.txt

2023-09-27 18:28:53: Installing Packages into Virtual Environment...Success

2023-09-27 18:28:53: Setup complete

Installer exited with code 0

modified 29-Sep-23 17:54pm.
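The installation log above hides a failure that is easy to miss: pip raised ReadTimeoutError against download.pytorch.org, yet the next line reads "Installing Packages into Virtual Environment...Success" and the installer exits with code 0. A minimal sketch for scanning a saved copy of such a log, assuming plain text with one entry per line; the markers are simply strings that appear in this log, not an official list.

```python
import sys

# Strings seen in the failing log above; extend as needed.
ERROR_MARKERS = ("ERROR:", "ReadTimeoutError", "Traceback (most recent call last)")


def find_errors(lines):
    """Yield (line_number, text) for every line containing an error marker."""
    for number, line in enumerate(lines, start=1):
        if any(marker in line for marker in ERROR_MARKERS):
            yield number, line.rstrip()


if __name__ == "__main__" and len(sys.argv) > 1:
    with open(sys.argv[1], encoding="utf-8", errors="replace") as log:
        hits = list(find_errors(log))
    for number, text in hits:
        print(f"{number}: {text}")
    # Unlike the installer, exit non-zero when anything suspicious was found.
    sys.exit(1 if hits else 0)
```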
